#programming-uncertainty

#software-development #ai #software-engineering #ai-tools #ai-generated-code #github #productivity #agentic-ai

Information security

from TNW | Next-Featured

1 day ago - Lovable security crisis: 48 days of exposed projects, closed bug reports, & the structural failure of vibe coding security

Lovable's security incidents expose vulnerabilities in AI-generated code and highlight a market focus on growth over security.

from SecurityWeek

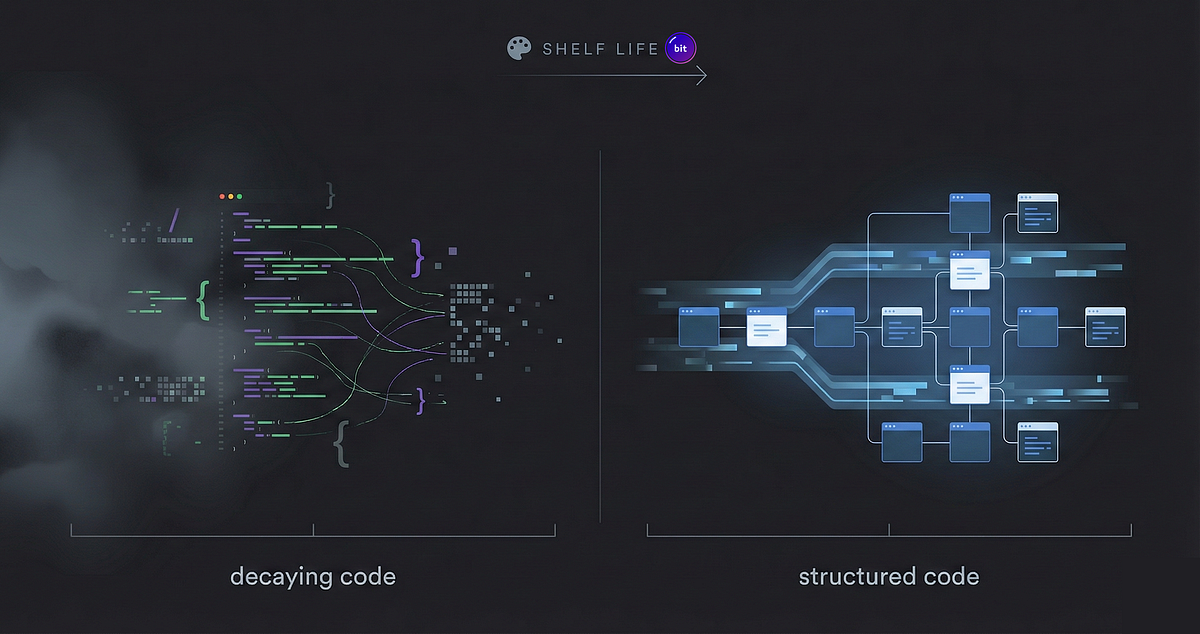

2 months ago - How to Eliminate the Technical Debt of Insecure AI-Assisted Software Development

This extends to the software development community, which is seeing a near-ubiquitous presence of AI-coding assistants as teams face pressures to generate more output in less time. While the huge spike in efficiencies greatly helps them, these teams too often fail to incorporate adequate safety controls and practices into AI deployments. The resulting risks leave their organizations exposed, and developers will struggle to backtrack in tracing and identifying where - and how - a security gap occurred.

Artificial intelligence

from Medium

1 month ago - AI writes the code and humans still write the rules

A new generation of tools that let anyone - designers, marketers, founders, students - describe an app in plain English and watch it get built in real time. No compiler knowledge. No debugging in terminals. No Stack Overflow. Just a conversation with a machine that builds things.

Artificial intelligence

from InfoWorld

2 months ago - AI will not save developer productivity

The software industry is collectively hallucinating a familiar fantasy. We visited versions of it in the 2000s with offshoring and again in the 2010s with microservices. Each time, the dream was identical: a silver bullet for developer productivity, a lever managers can pull to make delivery faster, cheaper, and better. Today, that lever is generative AI, and the pitch is seductively simple: If shipping is bottlenecked by writing code, and large language models can write code instantly, then using an LLM means velocity should explode.

Artificial intelligence

from InfoWorld

2 months ago - Six reasons to use coding agents

One thing I always do when I prompt a coding agent is to tell it to ask me any questions that it might have about what I've asked it to do. (I need to add this to my default system prompt...) And, holy mackerel, if it doesn't ask good questions. It almost always asks me things that I should have thought of myself.

Software development

from DevOps.com

1 month ago - When AI Gets It Wrong: The Insecure Defaults Lurking in Your Code

Generative AI accelerates code development but introduces security vulnerabilities because AI models learn insecure patterns from training data rather than understanding security principles.
