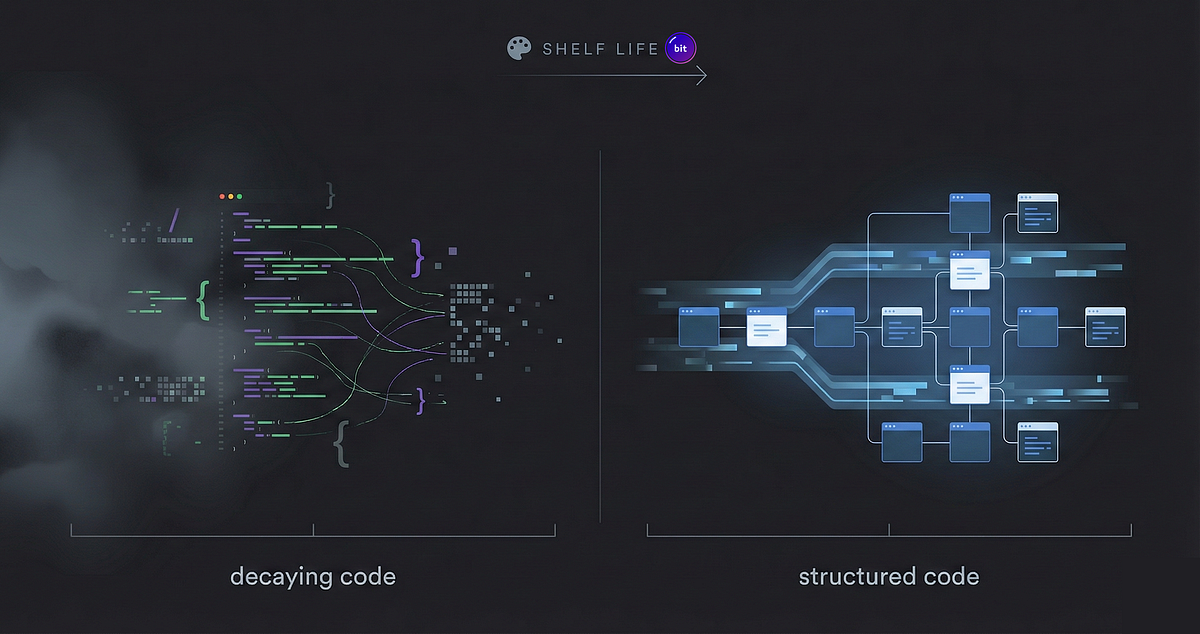

The quality of AI-generated code depends on the structure of the codebase it lands in, not on the model. Code that lands without explicit boundaries, declared dependencies, and clear contracts becomes a black box. Black-box code is readable but not changeable: reading it does not give you enough information to modify it safely. Common failure modes include modules that mix responsibilities, implicit runtime connections between services, missing function contracts, and documentation that restates what the code does instead of explaining why it exists and how it fails. These issues compound across a codebase: every change gets harder than the last, and the usual end state is a large rewrite.

"We build production platforms with AI every day, and we work with teams doing the same with their own stack -Cursor, Claude Code, Copilot. The difference shows up fast. By day two, some codebases are already harder to change than they were yesterday. Others keep getting easier. The difference is never the model. It's what the code lands in. The teams we work with that hit a wall? It's always the same story."

"Not code you can't read. Code where reading it doesn't give you enough to change it. We see the same failure modes over and over: No boundaries. A notification system that handles email, SMS, push, and webhooks in one module. Everything touches everything. You want to swap the email provider? Good luck - it shares state with SMS logic, and that relationship isn't declared anywhere."

Read at Medium
