#uptime

#uptime

[ follow ]

#observability #devops #incident-response #cloud-computing #distributed-systems #automation #incident-management

Web design

fromLondon Business News | Londonlovesbusiness.com

1 week agoSeven leading hosting providers for small businesses that need reliable performance - London Business News | Londonlovesbusiness.com

Small business owners should prioritize uptime, speed, and support quality when choosing a hosting provider.

Web frameworks

fromMedium

1 month agoWhy Most Spring Boot Apps Fail in Production (7 Critical Mistakes)

Spring Boot production failures stem from seven critical mistakes including improper dependency injection, configuration errors, and resource management issues that developers can systematically avoid.

Information security

fromComputerworld

1 month agoStorage vendor offers a real guarantee - but check out those fine-print exceptions

Tech vendors frequently offer performance guarantees with substantial financial penalties, but hidden exceptions in EULAs often make claims difficult or impossible to collect.

Miscellaneous

fromDevOps.com

1 month agoI Learned Traffic Optimization Before I Learned Cloud Computing. It Turns Out the Lessons Were the Same. - DevOps.com

Cloud infrastructure requires understanding system behavior and costs to operate effectively at speed, similar to how skilled drivers anticipate conditions rather than simply driving fast.

Information security

fromThe Hacker News

2 months agoDevOps & SaaS Downtime: The High (and Hidden) Costs for Cloud-First Businesses

Relying solely on public cloud and DevOps SaaS platforms increases operational risk as outages, attacks, and Shared Responsibility gaps drive rising downtime and service degradation.

fromDevOps.com

1 month agoWhat to do About AI's Forced Rethink of Reliability in Modern DevOps - DevOps.com

For years, reliability discussions have focused on uptime and whether a service met its internal SLO. However, as systems become more distributed, reliant on complex internet stacks, and integrated with AI, this binary perspective is no longer sufficient. Reliability now encompasses digital experience, speed, and business impact. For the second year in a row, The SRE Report highlights this shift.

Software development

fromInfoWorld

2 months agoThe private cloud returns, for AI workloads

A North American manufacturer spent most of 2024 and early 2025 doing what many innovative enterprises did: aggressively standardizing on the public cloud by using data lakes, analytics, CI/CD, and even a good chunk of ERP integration. The board liked the narrative because it sounded like simplification, and simplification sounded like savings. Then generative AI arrived, not as a lab toy but as a mandate. "Put copilots everywhere," leadership said. "Start with maintenance, then procurement, then the call center, then engineering change orders."

Artificial intelligence

fromNew Relic

3 months agoTraditional Network Monitoring is Failing

For any IT department, these four words are the beginning of a familiar, often frustrating, journey. In our modern world, where business success is built on distributed applications and hybrid cloud architectures, the network is the circulatory system. When it fails, everything grinds to a halt. Yet, despite its critical importance, it often remains a black box-a source of blame that is difficult to prove or disprove.

Information security

fromTheregister

1 month agoServer crashes traced to one very literal knee-jerk reaction

It was the time of Novell networks, RG58 cables, and bulky tower PCs. It was also a time before the telemarketer's IT department employed specialists. Carter and his two colleagues - boss Mike and part-time student Stefan - therefore handled tasks ranging from programming to support, and everything in between.

Software development

Software development

fromDbmaestro

4 years agoIf You Don't Have Database Delivery Automation, Brace Yourself for These 10 Problems |

Manual database processes break DevOps pipelines; only 12% deploy database changes daily, causing configuration drift, frequent errors, slower time-to-market, and reduced productivity.

fromTechRepublic

2 months agoWhat Are the Pros and Cons of Data Centers?

When ChatGPT launched in late 2022, I watched something remarkable happen. Within two months, it hit 100 million users, a growth rate that sent shockwaves through Silicon Valley. Today, it has over 800 million weekly active users. That launch sparked an explosion in AI development that has fundamentally changed how we build and operate the infrastructure powering our digital world.

Artificial intelligence

fromDbmaestro

4 years agoWhat is Database Delivery Automation and Why Do You Need It?

Manual database deployment means longer release times. Database specialists have to spend several working days prior to release writing and testing scripts which in itself leads to prolonged deployment cycles and less time for testing. As a result, applications are not released on time and customers are not receiving the latest updates and bug fixes. Manual work inevitably results in errors, which cause problems and bottlenecks.

Software development

DevOps

fromLondon Business News | Londonlovesbusiness.com

1 month agoSigns it's time to move to dedicated server hosting - London Business News | Londonlovesbusiness.com

Dedicated server hosting becomes necessary when traffic surges cause performance degradation, complex database operations require absolute resource isolation, and security demands exceed virtual environment capabilities.

fromDevOps.com

1 month agoZero Downtime Multicloud Migrations for Observability Control Planes - DevOps.com

An observability control plane isn't just a dashboard. It's the operational authority system. It defines alert rules, routing, ownership, escalation policy, and notification endpoints. When that layer is wrong, the impact is immediate. The wrong team gets paged. The right team never hears about the incident. Your service level indicators look clean while production burns.

DevOps

Software development

fromInfoQ

1 month agoKubernetes Introduces Node Readiness Controller to Improve Pod Scheduling Reliability

Kubernetes introduces the Node Readiness Controller to improve scheduling accuracy by synchronizing the API server's node readiness view with actual kubelet health signals, reducing pod scheduling onto unavailable nodes.

DevOps

fromNew Relic

1 month agoeBPF Network Metrics for Kernel-Level Observability | New Relic

New Relic's eBPF-based agent unifies network performance, APM telemetry, infrastructure metrics, and logging into a single lightweight solution, eliminating network blind spots and reducing mean time to innocence during incidents.

DevOps

fromDevOps.com

1 month agoOn-Call Rotation Best Practices: Reducing Burnout and Improving Response - DevOps.com

On-call duty is critical for system protection but often mismanaged, causing engineer burnout and attrition when rotations are poorly designed, alerts are excessive, and automation is lacking.

fromDevOps.com

1 month agoHarness Readies Resilience Testing Platform to Make Applications More Robust - DevOps.com

The Harness Resilience Testing platform extends the scope of the tests provided to include application load and disaster recovery (DR) testing tools that will enable DevOps teams to further streamline workflows.

DevOps

DevOps

fromSitePoint Forums | Web Development & Design Community

2 months agoWhat is the best way to differentiate between performance testing and a true reliability test system?

Prioritize fault tolerance before resource optimization; automate long-term reliability tests with staged, parallel, and targeted strategies to preserve CI/CD velocity.

fromDbmaestro

5 years agoDatabase Delivery Automation in the Multi-Cloud World

The main advantage of going the Multi-Cloud way is that organizations can "put their eggs in different baskets" and be more versatile in their approach to how they do things. For example, they can mix it up and opt for a cloud-based Platform-as-a-Service (PaaS) solution when it comes to the database, while going the Software-as-a-Service (SaaS) route for their application endeavors.

DevOps

fromInfoWorld

2 months agoThe 'Super Bowl' standard: Architecting distributed systems for massive concurrency

When I manage infrastructure for major events (whether it is the Olympics, a Premier League match or a season finale) I am dealing with a "thundering herd" problem that few systems ever face. Millions of users log in, browse and hit "play" within the same three-minute window. But this challenge isn't unique to media. It is the same nightmare that keeps e-commerce CTOs awake before Black Friday or financial systems architects up during a market crash. The fundamental problem is always the same: How do you survive when demand exceeds capacity by an order of magnitude?

DevOps

fromNew Relic

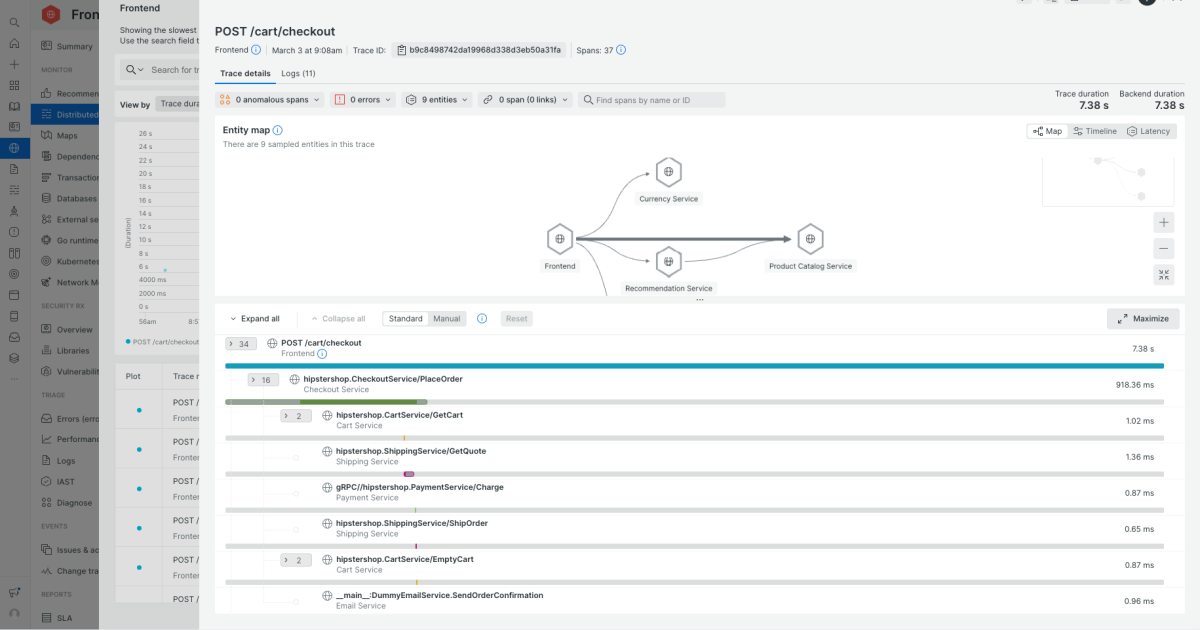

2 months ago5 Best Application Performance Monitoring Tools to Consider in 2026

Support for distributed systems. Check how well the tool handles microservices, serverless, and Kubernetes. Can you follow a request across services, queues, and third-party APIs? Does it understand pods, nodes, clusters, and autoscaling events, or does it treat everything like a static host? Correlation across metrics, logs, and traces. In an incident, you shouldn't be copying IDs between tools. Look for the ability to pivot directly from a slow trace to relevant logs,

DevOps

[ Load more ]