#ai-rack-scale

Data science

from TechRepublic

1 month ago: Inside the Gas Engine Strategy Powering AI's Next Wave

Gas reciprocating engines are emerging as a critical power solution for AI data centers, with manufacturers like Caterpillar securing multi-gigawatt orders to meet demand that exceeds grid and turbine capacity.

from InfoWorld

2 months ago: The private cloud returns, for AI workloads

A North American manufacturer spent most of 2024 and early 2025 doing what many innovative enterprises did: aggressively standardizing on the public cloud by using data lakes, analytics, CI/CD, and even a good chunk of ERP integration. The board liked the narrative because it sounded like simplification, and simplification sounded like savings. Then generative AI arrived, not as a lab toy but as a mandate. "Put copilots everywhere," leadership said. "Start with maintenance, then procurement, then the call center, then engineering change orders."

Artificial intelligence

from DevOps.com

1 month ago: Zero Downtime Multicloud Migrations for Observability Control Planes

An observability control plane isn't just a dashboard. It's the operational authority system. It defines alert rules, routing, ownership, escalation policy, and notification endpoints. When that layer is wrong, the impact is immediate. The wrong team gets paged. The right team never hears about the incident. Your service level indicators look clean while production burns.

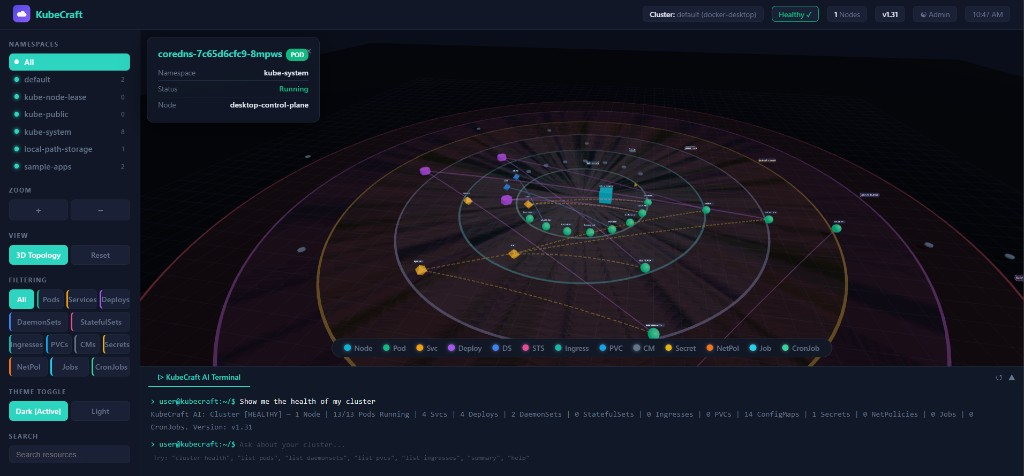

DevOps

from Computerworld

2 months ago: Intel sets sights on data center GPUs amid AI-driven infrastructure shifts

Intel is making a new push into GPUs, this time with a focus on data center workloads, as the chipmaker looks to reestablish itself in a market increasingly shaped by AI-driven demand and dominated by Nvidia. CEO Lip-Bu Tan said that after hiring a senior GPU architect, the company is working directly with customers to define requirements, signaling a more demand-driven approach as enterprises and cloud providers weigh their options for accelerated computing, according to a Reuters report.

Artificial intelligence

from DBmaestro

5 years ago: Database Delivery Automation in the Multi-Cloud World

The main advantage of going multi-cloud is that organizations can "put their eggs in different baskets" and be more versatile in how they operate. For example, they can opt for a cloud-based Platform-as-a-Service (PaaS) solution for the database while going the Software-as-a-Service (SaaS) route for their applications.

DevOps
