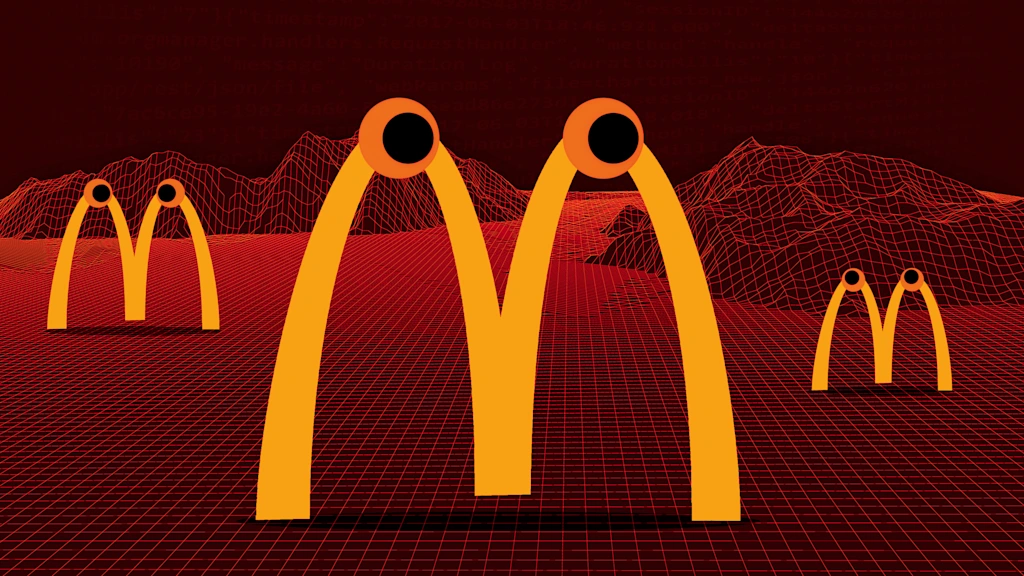

"Users have been hijacking AI-powered customer service bots, compelling them to perform unauthorized tasks like providing extraordinary product deals and assisting in legally questionable actions."

"Viral posts claimed that McDonald's AI customer support agent could debug complex Python programming code, gaining massive attention with 1.6 million views and 30,000 likes."

"An internal investigation found no evidence of the exploit, and the circulating screenshots and videos are believed to be fraudulent, similar to past incidents with Chipotle's bot."

"The technical vulnerability described, known as prompt injection, is real and poses genuine risks when companies deploy AI models with programmed system prompts."

Users have been manipulating AI-powered customer service bots into performing tasks outside their intended function, such as debugging code. Viral posts claimed that McDonald's AI customer support agent could debug complex Python code, drawing 1.6 million views and 30,000 likes. An internal investigation found no evidence of the exploit, however, and the circulating screenshots and videos are believed to be fraudulent, much like earlier claims about Chipotle's bot. Even so, the underlying vulnerability, prompt injection, is real and poses genuine risks when companies deploy AI models with programmed system prompts.

Read at Fast Company
