#output-index

#ai #postgresql #open-source #ai-infrastructure #caching #performance #data-governance #oracle #software-engineering

from The Register

4 days ago

DuckDB uses RDBMS to tackle lakehouse 'small changes' issue

You make a small change to your table, adding a single row, and it affects data lake performance because, due to the way they work, a new file has to be written that contains one row, and then a bunch of metadata has to be written. This is very inefficient, because formats like Parquet really don't want to store a single row, they want to store a million rows.

Data science

#ai-infrastructure

Business intelligence

from InfoWorld

1 month ago

Why Postgres has won as the de facto database: Today and for the agentic future

Leading enterprises achieve 5x ROI by adopting open source databases like PostgreSQL to unify structured and unstructured data for agentic AI, with 81% of successful enterprises committed to open source strategies.

Business intelligence

from InfoWorld

1 month ago

Snowflake's new 'autonomous' AI layer aims to do the work, not just answer questions

Project SnowWork is Snowflake's autonomous AI layer that automates data analysis tasks like forecasting, churn analysis, and report generation without requiring data team intervention.

from InfoWorld

1 month ago

MariaDB taps GridGain to keep pace with AI-driven data demands

Hyperscalers and major data platform vendors offer integrated services across storage, analytics, and model infrastructure. MariaDB's differentiation will likely depend on whether the combined platform can deliver operational speed and simplicity that organizations find easier to run than those larger stacks.

Business intelligence

from Search Engine Roundtable

2 months ago

Google & Bing Call Markdown Files Messy & Causes More Crawl Load

What happens when the AI companies (inevitably) encounter spam and attempts at SEO/GEO manipulation in the markdown files targeted to bots? What happens when the .md files no longer provide an equivalent experience to what users are seeing? What happens if they continue crawling those pages but actually toss them out before using the content to form a response? ...And we keep conflating "bot crawling activity" with "the bots are using/liking my markdown content?" How will we know if they're actually using the .md files or not?

Marketing tech

from Raymond Camden

2 months ago

I threw thousands of files at Astro and you won't believe what happened next...

I began by creating a soft link locally from my blog's repo of posts to the src/pages/posts of a new Astro site. My blog currently has 6742 posts (all high quality I assure you). Each one looks like so:

    ---
    layout: post
    title: "Creating Reddit Summaries with URL Context and Gemini"
    date: "2026-02-09T18:00:00"
    categories: ["development"]
    tags: ["python","generative ai"]
    banner_image: /images/banners/cat_on_papers2.jpg
    permalink: /2026/02/09/creating-reddit-summaries-with-gemini
    description: Using Gemini APIs to create a summary of a subreddit.
    ---

    Interesting content no one will probably read here...

Austin

Miscellaneous

from DevOps.com

1 month ago

I Learned Traffic Optimization Before I Learned Cloud Computing. It Turns Out the Lessons Were the Same.

Cloud infrastructure requires understanding system behavior and costs to operate effectively at speed, similar to how skilled drivers anticipate conditions rather than simply driving fast.

Tech industry

from InfoQ

2 months ago

Uber Moves from Static Limits to Priority-Aware Load Control for Distributed Storage

Priority-aware, colocated load management with CoDel and per-tenant Scorecard protects stateful multi-tenant databases by prioritizing critical traffic and adapting dynamically to prevent overloads.

Node JS

from The NodeSource Blog - Node.js Tutorials, Guides, and Updates

6 months ago

Intelligent Observability: How AI is Transforming Node.js Telemetry into Actionable Optimization

N|Sentinel uses AI integrated with N|Solid to detect Node.js anomalies, analyze runtime behavior, and provide real-time actionable insights to prevent performance issues.

from InfoWorld

2 months ago

AI is changing the way we think about databases

Developers have spent the past decade trying to forget databases exist. Not literally, of course. We still store petabytes. But for the average developer, the database became an implementation detail; an essential but staid utility layer we worked hard not to think about. We abstracted it behind object-relational mappers (ORM). We wrapped it in APIs. We stuffed semi-structured objects into columns and told ourselves it was flexible.

Software development

Data science

from TechRepublic

1 month ago

Inside the Gas Engine Strategy Powering AI's Next Wave

Gas reciprocating engines are emerging as a critical power solution for AI data centers, with manufacturers like Caterpillar securing multi-gigawatt orders to meet demand that exceeds grid and turbine capacity.

Data science

from InfoWorld

1 month ago

Buyer's guide: Comparing the leading cloud data platforms

Five leading cloud data platforms (Databricks, Snowflake, Amazon Redshift, Google BigQuery, and Microsoft Fabric) offer distinct architectural approaches for enterprise data storage, analytics, and AI workloads.

from Medium

3 months ago

How I Fixed a Critical Spark Production Performance Issue (and Cut Runtime by 70%)

"The job didn't fail. It just... never finished." That was the worst part. No errors. No stack traces. Just a Spark job running forever in production - blocking downstream pipelines, delaying reports, and waking up on-call engineers at 2 AM. This is the story of how I diagnosed a real Spark performance issue in production and fixed it drastically, not by adding more machines - but by understanding Spark properly.

Data science

from InfoQ

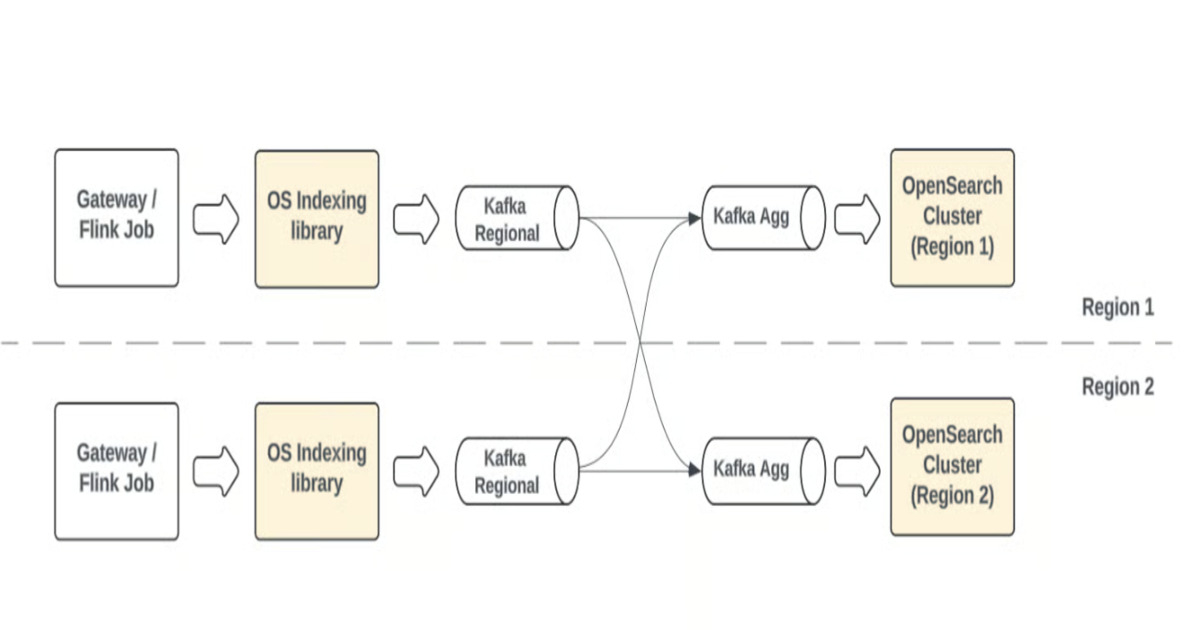

1 month ago

Pinterest's CDC-Powered Ingestion Slashes Database Latency from 24 Hours to 15 Minutes

Pinterest deployed a next-generation database ingestion framework using CDC, Kafka, Flink, Spark, and Iceberg to reduce data latency from 24+ hours to minutes while processing only changed records.

Artificial intelligence

from InfoQ

2 months ago

Autonomous Big Data Optimization: Multi-Agent Reinforcement Learning to Achieve Self-Tuning Apache Spark

A Q-learning agent autonomously learns and generalizes optimal Spark configurations by discretizing dataset features and combining with Adaptive Query Execution for superior performance.

from InfoWorld

2 months ago

Databricks adds MemAlign to MLflow to cut cost and latency of LLM evaluation

By replacing repeated fine‑tuning with a dual‑memory system, MemAlign reduces the cost and instability of training LLM judges, offering faster adaptation to new domains and changing business policies. Databricks' Mosaic AI Research team has added a new framework, MemAlign, to MLflow, its managed machine learning and generative AI lifecycle development service. MemAlign is designed to help enterprises lower the cost and latency of training LLM-based judges, in turn making AI evaluation scalable and trustworthy enough for production deployments.

Artificial intelligence

from InfoQ

2 months ago

Firestore Adds Pipeline Operations with Over 100 New Query Features

Google has overhauled Firestore Enterprise edition's query engine, adding Pipeline operations that let developers chain together multiple query stages for complex aggregations, array operations, and regex matching. The update removes Firestore's longstanding query limitations and makes indexes optional, putting the database on par with other major NoSQL platforms. Pipeline operations work through sequential stages that transform data inside the database.

Software development
