
From SitePoint Forums | Web Development & Design Community

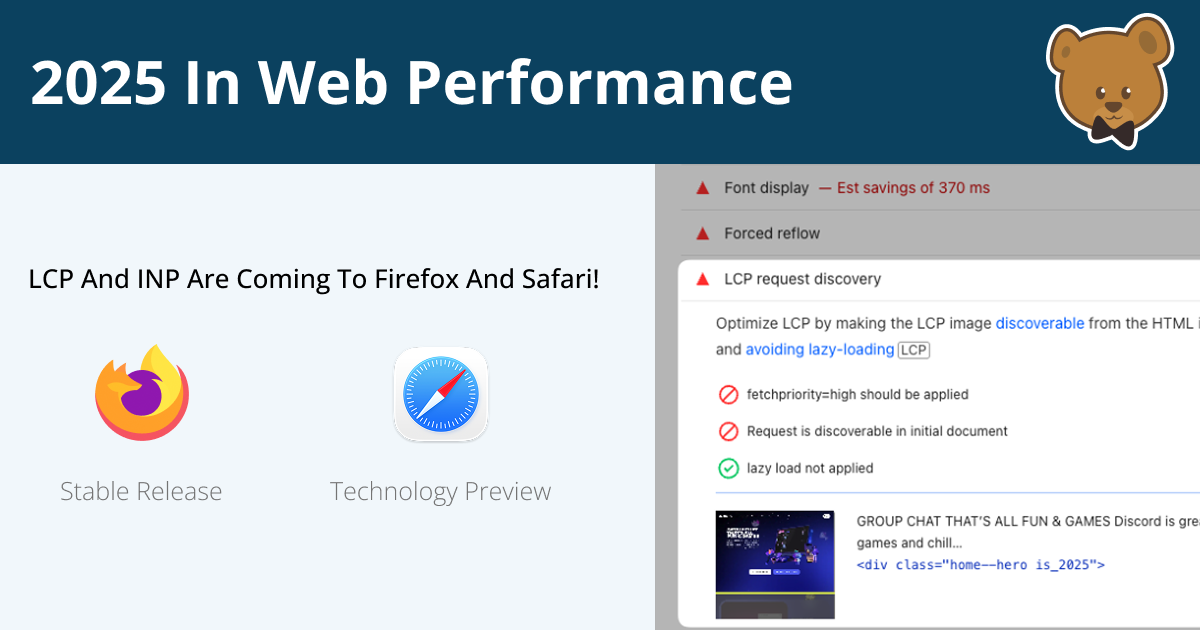

1 month ago

How are you handling YouTube embeds without hurting page speed or Core Web Vitals?

Balancing user experience with performance is crucial when embedding YouTube videos: the standard iframe embed loads the full player up front, even for visitors who never press play.
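One common approach is a click-to-load "facade": show a static thumbnail and swap in the real iframe only when the visitor clicks. Below is a minimal, hypothetical TypeScript sketch of the markup side. The helper names (`thumbnailUrl`, `embedUrl`, `placeholderHtml`, `iframeHtml`), the 11-character ID check, the `hqdefault.jpg` thumbnail and the privacy-enhanced `youtube-nocookie.com` domain are assumptions for illustration, not something from the original post.

```typescript
// Minimal sketch of a click-to-load ("facade") YouTube embed.
// All helper names are hypothetical; nothing here is an official YouTube API.

// Assumption: YouTube video IDs are 11 characters from [A-Za-z0-9_-].
const VIDEO_ID_PATTERN = /^[A-Za-z0-9_-]{11}$/;

function assertVideoId(id: string): void {
  if (!VIDEO_ID_PATTERN.test(id)) {
    throw new Error(`Unexpected YouTube video ID: ${id}`);
  }
}

// Escape text placed inside HTML attribute values.
function escapeHtml(text: string): string {
  return text
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;");
}

// Static thumbnail image: showing it does not request any player scripts.
function thumbnailUrl(id: string): string {
  assertVideoId(id);
  return `https://i.ytimg.com/vi/${id}/hqdefault.jpg`;
}

// Player URL, requested only after the visitor clicks the placeholder.
// Assumption: privacy-enhanced mode; www.youtube.com/embed/ is the alternative.
function embedUrl(id: string): string {
  assertVideoId(id);
  return `https://www.youtube-nocookie.com/embed/${id}?autoplay=1`;
}

// Lightweight placeholder. Explicit width/height on the thumbnail reserve
// space for the player, which helps reduce layout shift (CLS) on swap.
function placeholderHtml(id: string, title: string, width = 560, height = 315): string {
  assertVideoId(id);
  const safeTitle = escapeHtml(title);
  return (
    `<button type="button" class="yt-facade" data-video-id="${id}" ` +
    `aria-label="Play: ${safeTitle}">` +
    `<img src="${thumbnailUrl(id)}" alt="${safeTitle}" ` +
    `width="${width}" height="${height}" loading="lazy">` +
    `</button>`
  );
}

// Real player markup, inserted in place of the placeholder on click.
function iframeHtml(id: string, title: string, width = 560, height = 315): string {
  return (
    `<iframe src="${embedUrl(id)}" title="${escapeHtml(title)}" ` +
    `width="${width}" height="${height}" ` +
    `allow="autoplay; encrypted-media; picture-in-picture" allowfullscreen></iframe>`
  );
}
```

In the page, a small click handler would read `data-video-id` from the clicked `.yt-facade` button and replace the button with `iframeHtml(...)` (for example via `outerHTML`). Only at that point does the browser fetch the YouTube player, so the initial page load carries just one thumbnail image.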