Cryptocurrency

from Bitcoin Magazine

3 days ago

When Quantum Computers Come For Your Bitcoin: What Classical Property Law Says Happens Next

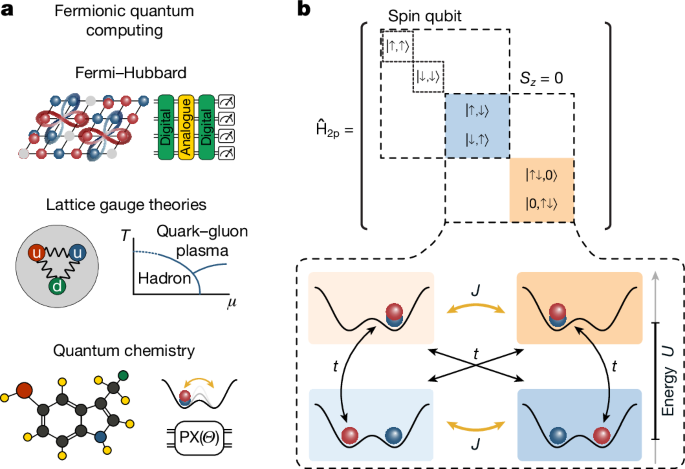

Quantum computing poses a challenge to Bitcoin's future, raising questions about the ownership and legality of coins accessed through quantum-derived keys.