"Traditional Transformer models face a critical bottleneck during inference: the key-value (KV) cache grows linearly with sequence length, consuming massive amounts of memory. For a model with 32 attention heads and a hidden dimension of 4096, storing keys and values for a single sequence of 2048 tokens requires over 1GB of memory. DeepSeek's MLA addresses this by introducing a clever compression-decompression mechanism inspired by Low-Rank Adaptation (LoRA)."

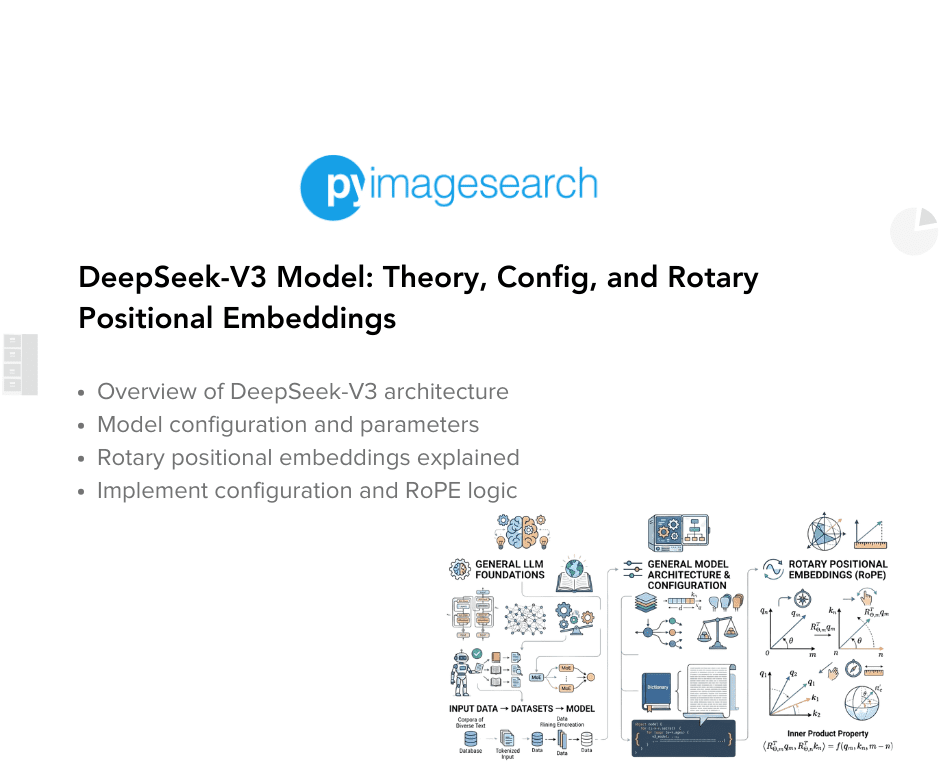

"DeepSeek-V3 model represents a significant milestone in this evolution, introducing a suite of cutting-edge techniques that address some of the most pressing challenges in modern language model development: memory efficiency during inference, computational cost during training, and effective capture of long-range dependencies."

DeepSeek-V3 tackles three major challenges in large language model development: memory efficiency during inference, computational cost during training, and effective capture of long-range dependencies. Among its techniques is Multi-head Latent Attention (MLA), which targets a critical bottleneck in traditional Transformer models. In standard models the KV cache grows linearly with sequence length and consumes enormous memory: over 1GB for a single 2048-token sequence in a model with 32 attention heads and a hidden dimension of 4096. MLA addresses this with a compression-decompression mechanism inspired by Low-Rank Adaptation (LoRA), achieving up to a 75% reduction in KV cache memory while preserving model quality. In practice, this means serving more concurrent users and processing longer contexts on the same hardware.
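The memory figures above can be checked with a few lines of arithmetic. The sketch below is illustrative only and is not taken from the article: the layer count (32), the 2-byte (FP16) storage format, and the latent width (2048) are assumptions, with the latent width chosen so that the result matches the quoted 75% reduction. The function names `kv_cache_bytes` and `latent_cache_bytes` are hypothetical helpers. The number of attention heads does not appear separately, on the assumption that the heads together span the full hidden dimension.

```python
def kv_cache_bytes(seq_len, hidden_dim, num_layers, bytes_per_value=2):
    """Bytes needed to cache full keys and values for one sequence."""
    # Factor of 2: one key vector and one value vector per token per layer.
    return 2 * seq_len * hidden_dim * num_layers * bytes_per_value


def latent_cache_bytes(seq_len, latent_dim, num_layers, bytes_per_value=2):
    """Bytes needed when only one compressed latent vector is cached
    per token per layer, and keys/values are re-expanded from it on use."""
    return seq_len * latent_dim * num_layers * bytes_per_value


SEQ_LEN = 2048     # from the text
HIDDEN_DIM = 4096  # from the text
NUM_LAYERS = 32    # assumption: not stated in the text
LATENT_DIM = 2048  # hypothetical: picked to reproduce the 75% figure

full = kv_cache_bytes(SEQ_LEN, HIDDEN_DIM, NUM_LAYERS)
compressed = latent_cache_bytes(SEQ_LEN, LATENT_DIM, NUM_LAYERS)
reduction = 1 - compressed / full

print(f"full KV cache: {full / 2**30:.2f} GiB")
print(f"latent cache:  {compressed / 2**30:.2f} GiB")
print(f"reduction:     {reduction:.0%}")
```

Under these assumptions the full cache comes to 1.00 GiB (about 1.07 GB) and the latent cache to 0.25 GiB, a 75% reduction. Both grow linearly with `SEQ_LEN`, so the compression lowers the slope of that growth but does not remove it.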

#large-language-models #multihead-latent-attention #memory-optimization #transformer-architecture #inference-efficiency

Read at PyImageSearch
