Python

from PyImageSearch

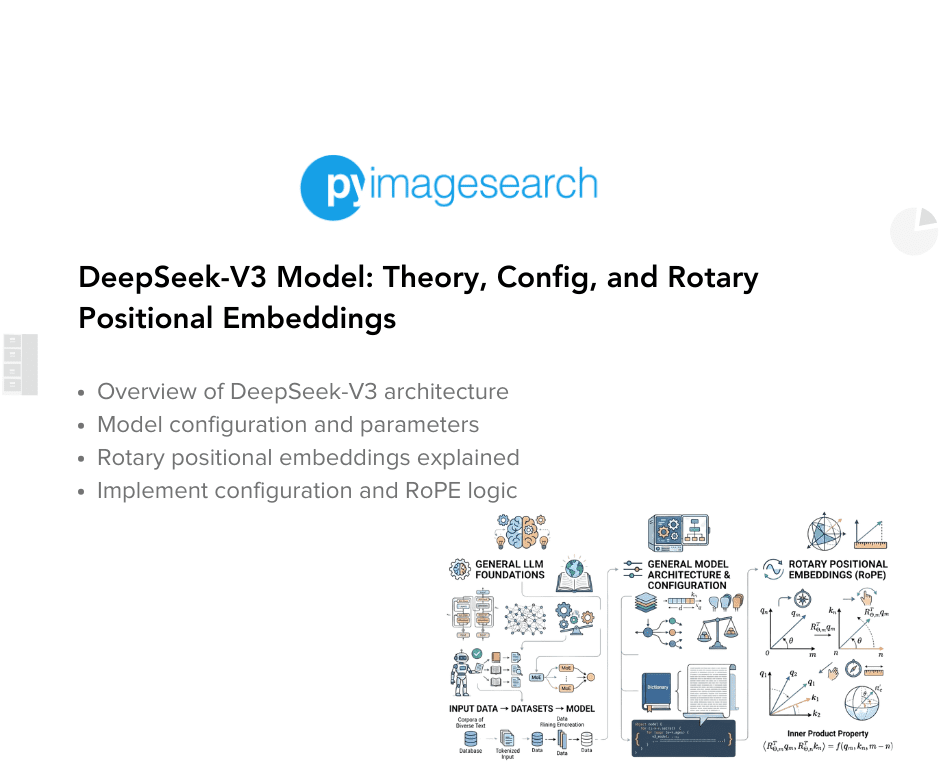

1 month ago

Build DeepSeek-V3: Multi-Head Latent Attention (MLA) Architecture - PyImageSearch

Multi-Head Latent Attention (MLA) reduces the compute and memory costs of traditional multi-head attention by compressing keys and values into a compact latent representation space, while preserving contextual understanding.
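
To make the idea concrete, here is a minimal NumPy sketch of the latent-compression step, not the article's or DeepSeek-V3's actual implementation. All sizes and weight names (`W_down`, `W_up_k`, `W_up_v`, `d_latent`) are illustrative assumptions, the weights are random, and details such as causal masking, rotary position embeddings, and query compression are left out. It shows that only the small latent tensor would need to be cached, with keys and values rebuilt from it on demand.

```python
import numpy as np

rng = np.random.default_rng(0)

# Illustrative sizes only (assumptions, not DeepSeek-V3's configuration).
seq_len, d_model, n_heads, d_head, d_latent = 16, 64, 4, 16, 8

x = rng.standard_normal((seq_len, d_model))  # token hidden states

# Hypothetical projection weights, randomly initialised for the sketch.
W_q = rng.standard_normal((d_model, n_heads * d_head)) / np.sqrt(d_model)
W_down = rng.standard_normal((d_model, d_latent)) / np.sqrt(d_model)
W_up_k = rng.standard_normal((d_latent, n_heads * d_head)) / np.sqrt(d_latent)
W_up_v = rng.standard_normal((d_latent, n_heads * d_head)) / np.sqrt(d_latent)


def split_heads(t):
    """Reshape (seq_len, n_heads * d_head) to (n_heads, seq_len, d_head)."""
    return t.reshape(seq_len, n_heads, d_head).transpose(1, 0, 2)


# Down-project once: this latent is the only tensor that would be cached.
latent = x @ W_down  # (seq_len, d_latent)

# Queries come from the hidden states; keys and values are rebuilt
# from the shared latent via up-projections.
q = split_heads(x @ W_q)
k = split_heads(latent @ W_up_k)
v = split_heads(latent @ W_up_v)

# Standard scaled dot-product attention per head (no causal mask here).
scores = q @ k.transpose(0, 2, 1) / np.sqrt(d_head)
scores -= scores.max(axis=-1, keepdims=True)  # numerical stability
weights = np.exp(scores)
weights /= weights.sum(axis=-1, keepdims=True)
out = (weights @ v).transpose(1, 0, 2).reshape(seq_len, n_heads * d_head)

# Cached values per sequence: full K and V versus the shared latent.
standard_cache = 2 * seq_len * n_heads * d_head
latent_cache = seq_len * d_latent
print(out.shape, standard_cache, latent_cache)
```

With these toy sizes the cache shrinks from 2048 stored values to 128. The actual saving in any real model depends on the latent width chosen relative to the number of heads and the head dimension.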