"Artificial intelligence ('AI') and generative AI such as ChatGPT require substantial memory and storage capacity to store extensive datasets, train intricate models, and process vast volumes of data."

"In a data center, GPUs are increasingly used to accelerate complex computations such as machine learning, deep learning, and scientific simulations. The extensive datasets these workloads rely on, including those required to train generative AI models, are stored on Hard Disk Drives (HDDs) and Solid State Drives (SSDs)."

"HDDs provide high-capacity storage at a lower cost, while SSDs offer faster data access. For data retrieval, fast reads from SSDs significantly reduce the time it takes to load training data into memory for processing, which minimizes training bottlenecks caused by slow data access."

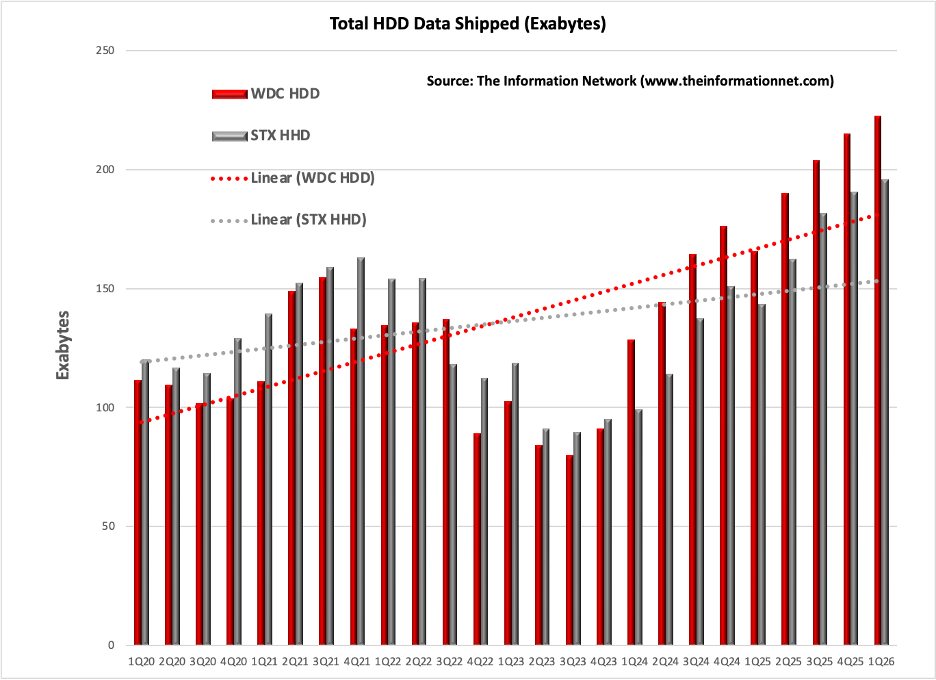

"In other words, while GPUs have become the computational engine of AI, the enormous amount of data created by AI applications is increasingly driving demand for high-capacity storage. That trend is now visible not only in hyperscaler data centers but also in pricing, margins, and equity performance across the storage industry."

Artificial intelligence and generative AI require substantial memory and storage to hold extensive datasets, train complex models, and process large volumes of data. Nvidia GPUs serve as the computational engine for machine learning, deep learning, and scientific simulations, while HDDs and SSDs store the training datasets. HDDs offer high-capacity storage at lower cost, and SSDs provide faster data access. Fast retrieval from SSDs reduces the time needed to load training data into memory, minimizing training delays caused by slow access. As AI applications generate enormous volumes of data, demand for high-capacity storage is rising, a trend now visible in hyperscaler data centers and in pricing, margins, and equity performance across the storage industry.

Read at 24/7 Wall St.
