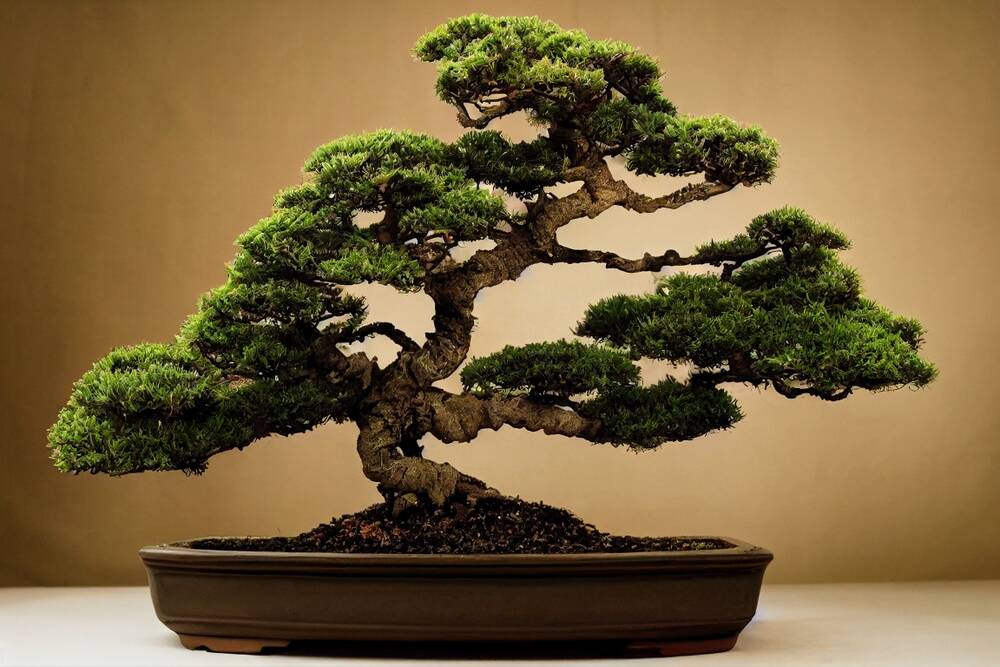

"Our first proof point is 1-bit Bonsai 8B, a 1-bit model that fits into 1.15 GB of memory and delivers over 10x the intelligence density of its full-precision counterparts."

"It is 14x smaller, 8x faster, and 5x more energy efficient on edge hardware while remaining competitive with other models in its parameter-class."

"PrismML's Bonsai model family is based on an architecture where each weight is represented only by its sign, {−1, +1}, while a shared scale factor is stored for each group of weights."

PrismML has introduced 1-bit Bonsai 8B, a 1-bit large language model that the company says remains competitive with other models in its parameter class while being far smaller and faster. According to PrismML, the model fits into 1.15 GB of memory and delivers over 10 times the intelligence density of its full-precision counterparts. On edge hardware it is described as 14 times smaller, 8 times faster, and 5 times more energy efficient. The efficiency comes from the weight format: each weight is stored only as its sign, {−1, +1}, and one shared scale factor is stored for each group of weights.
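
The sign-plus-group-scale format described above can be illustrated with a minimal sketch. This is not PrismML's implementation: the source does not say how the scale is computed, what the group size is, or how the scale is stored. The sketch assumes the scale is the mean absolute value of each group (a common choice for sign quantization), and the function names, the group size of 128, and the 16-bit scale are all hypothetical values picked for illustration.

```python
from typing import List, Tuple


def quantize_1bit(weights: List[float], group_size: int) -> Tuple[List[int], List[float]]:
    """Keep only the sign of each weight, plus one shared scale per group."""
    signs: List[int] = []
    scales: List[float] = []
    for start in range(0, len(weights), group_size):
        group = weights[start:start + group_size]
        # Assumption: the shared scale is the group's mean absolute value.
        scales.append(sum(abs(w) for w in group) / len(group))
        # Each weight becomes +1 or -1; zero is mapped to +1 by convention here.
        signs.extend(1 if w >= 0 else -1 for w in group)
    return signs, scales


def dequantize_1bit(signs: List[int], scales: List[float], group_size: int) -> List[float]:
    """Reconstruct approximate weights as sign * scale of the owning group."""
    return [s * scales[i // group_size] for i, s in enumerate(signs)]


def bits_per_weight(group_size: int, scale_bits: int = 16) -> float:
    """Effective storage cost: 1 sign bit plus the amortized scale bits."""
    return 1 + scale_bits / group_size


def model_size_gb(n_params: float, bits: float) -> float:
    """Weight storage in gigabytes (10^9 bytes) for n_params weights."""
    return n_params * bits / 8 / 1e9


# Tiny worked example with two groups of two weights each.
signs, scales = quantize_1bit([0.5, -1.5, 1.0, -3.0], group_size=2)
restored = dequantize_1bit(signs, scales, group_size=2)

# Rough size check under the assumed group size (128) and scale width (16 bits).
one_bit_gb = model_size_gb(8e9, bits_per_weight(128))
fp16_gb = model_size_gb(8e9, 16)
```

Under these assumptions, 8 billion weights cost 1.125 bits each, or about 1.1 GB, against 16 GB at 16-bit precision. That is roughly consistent with the quoted 1.15 GB and 14x figures, though the actual parameter count and storage layout may differ.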

Read at The Register

