"Frontier AI systems are simply not reliable enough to operate without human oversight in high-stakes physical environments. The Pentagon's demand was, in structural terms, a demand to eliminate the human's ability to redirect, halt, or override the system. Amodei's refusal was an insistence on maintaining State-Space Reversibility: the architectural commitment to keeping the human in the loop precisely because the system lacks the functional grounding to be trusted outside it."

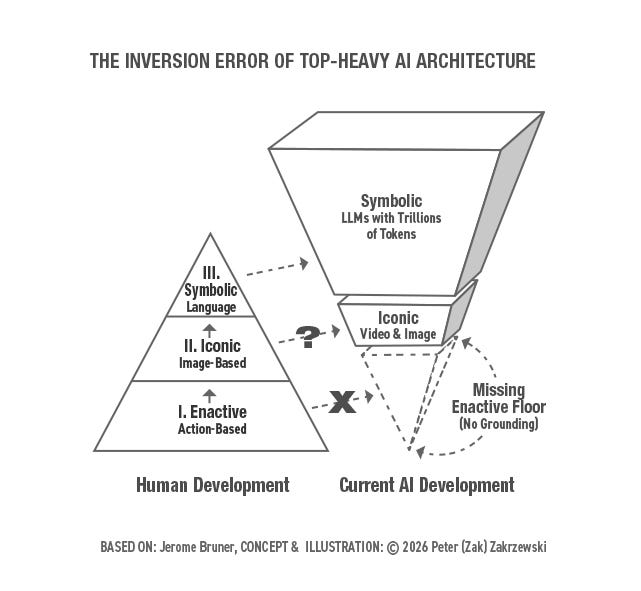

"AI researchers and others concerned with AI development keep asking why Large Language Models (LLMs) hallucinate dangerously. I think we are all asking the wrong question. The hallucination problem is a symptom. The real problem is structural: we built the peak of synthetic cognition without the base. I think of it as the Inversion Error."

In March 2026, Anthropic CEO Dario Amodei rejected Pentagon demands to remove Claude's safeguards. He argued that frontier AI systems are structurally unreliable for autonomous operation in physical environments without human oversight. The decision reflects the principle of State-Space Reversibility: preserving the human ability to redirect, halt, or override an AI system. The core issue underlying these safety concerns is the Inversion Error. LLMs were developed without adequate functional grounding in embodied cognition and real-world constraints, and hallucinations are symptoms of this deeper structural problem. A design scholar's sustained interaction with AI systems revealed that current synthetic cognition lacks the embodied foundation needed for independent operation in high-stakes scenarios.

Read at Medium
