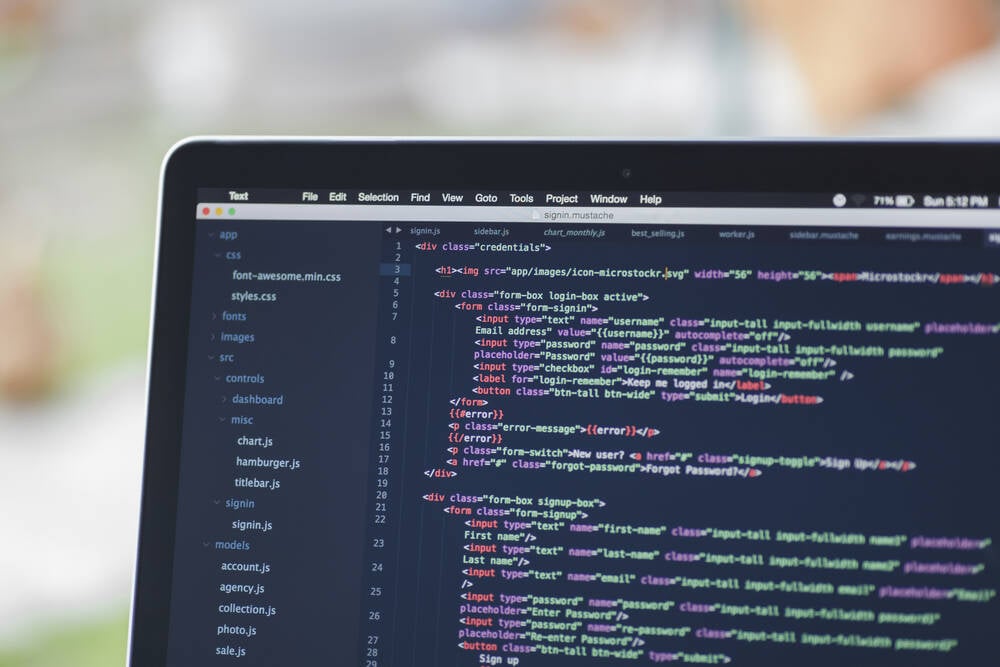

Rising prices and aggressive rate limits from major AI providers are pushing developers to consider local models for coding tasks. Alibaba's Qwen3.6-27B is one such option, small enough to run on a 32 GB M-series Mac or a 24 GB GPU. Techniques such as extended reasoning and mixture-of-experts architectures have made smaller models more competitive, letting them handle complex tasks that once required far larger ones. Running models locally could cut costs substantially while still covering core coding work.

"With model devs pushing more aggressive rate limits, raising prices, or even abandoning subscriptions for usage-based pricing, that vibe-coded hobby project is about to get a whole lot more expensive."

"Alibaba recently dropped Qwen3.6-27B, which the cloud and e-commerce giant boasts packs 'flagship coding power' into a package small enough to run on a 32 GB M-series Mac or 24 GB GPU."

"Reasoning capabilities allow small models to make up for their size by 'thinking' for longer, mixture-of-experts models mean you don't need terabytes a second of memory bandwidth for an interactive experience."

Read at The Register