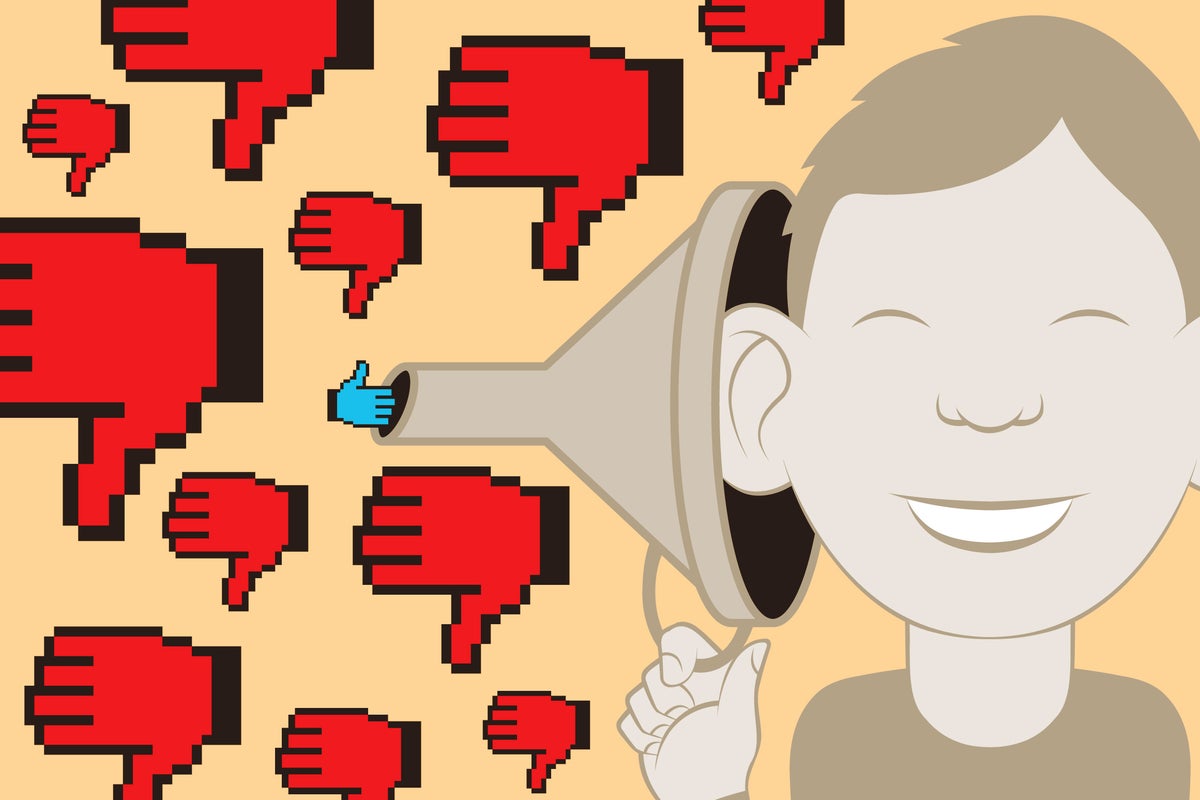

"People's views are becoming more and more polarized, with echo chambers (social bubbles that reinforce existing beliefs) exacerbating differences in opinion. This divergence doesn't just apply to political opinions; it also touches on factual topics, from climate change to vaccination. And social media is not the sole culprit, according to a recent study published in the Proceedings of the National Academy of Sciences USA."

"Online participants were asked to rate their beliefs on six topics, including nuclear energy and caffeine's health effects. They then chose search terms to learn more about each topic. The researchers rated the terms' scope and found that between 9 and 34 percent (depending on the topic) were narrow. For example, when researching the health effects of caffeine, one participant used 'caffeine negative effects,' whereas others used 'benefits of caffeine.'"

"These narrow terms tended to align with participants' existing beliefs, and generally less than 10 percent did this knowingly. 'People often pick search terms that reflect what they believe, without realizing it,' says Eugina Leung of Tulane University's business school, who led the study. 'Search algorithms are designed to give the most relevant answers for whatever we type, which ends up reinforcing what we already thought.'"

Participants rated beliefs on six topics, including nuclear energy and caffeine's health effects, then selected search terms to learn more. Researchers categorized the terms by scope and found that 9–34% of terms were narrow depending on topic. Narrow search terms tended to align with participants' prior beliefs, and fewer than 10% of participants recognized that alignment. Examples include one person searching 'caffeine negative effects' while others searched 'benefits of caffeine.' Search engines and AI-assisted tools like ChatGPT and Bing returned results that reinforced the intent of the search terms. A proposed algorithmic tweak could surface a broader range of perspectives.

Read at www.scientificamerican.com
