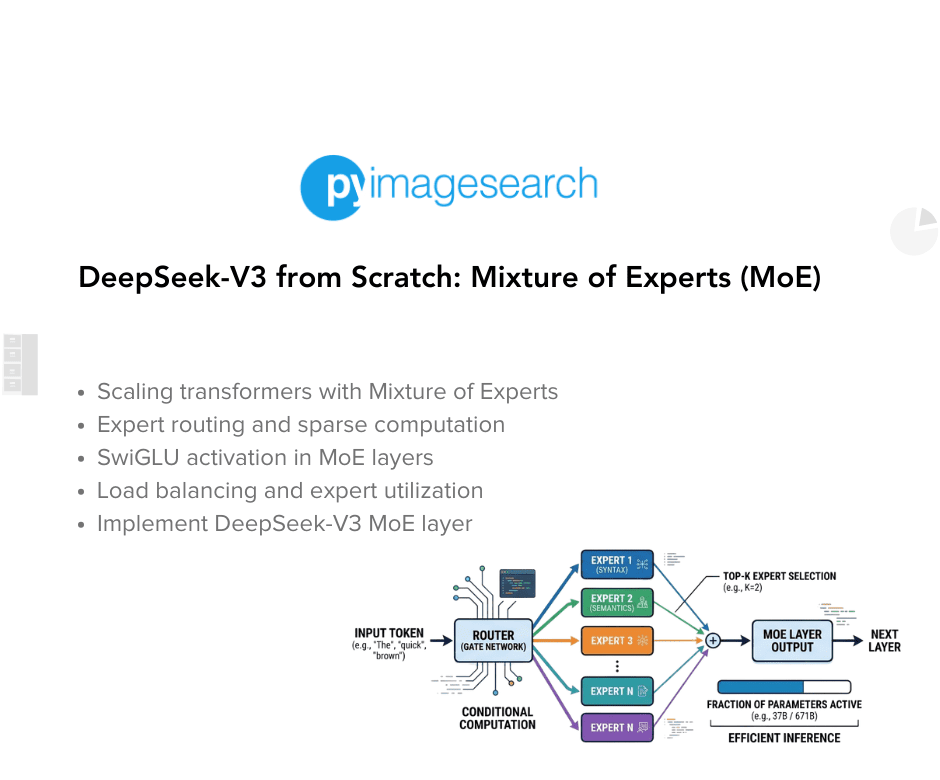

Mixture of Experts (MoE) allows DeepSeek-V3 to scale model capacity dynamically while maintaining computational efficiency. By selectively routing tokens through specialized expert networks, the model expands its representational power without activating all parameters for every input. This approach addresses the tradeoff between model size and training costs. The implementation of MoE is part of a broader effort to reconstruct DeepSeek-V3, integrating various innovations like RoPE and MLA into a cohesive architecture that balances scale, efficiency, and performance.

"MoE introduces a dynamic way of scaling model capacity without proportionally increasing computational cost. Instead of activating every parameter for every input, the model selectively routes tokens through specialized 'expert' networks."

"This installment continues our broader goal of reconstructing DeepSeek-V3 from scratch - showing how each innovation, from RoPE to MLA to MoE, fits together into a cohesive architecture that balances scale, efficiency, and performance."

Read at PyImageSearch

Unable to calculate read time

Collection

[

|

...

]