"In recent months, wrongful death and negligence lawsuits have been filed against OpenAI, the maker of ChatGPT, and Character.AI by parents of children who have died by suicide. In the case of one teen, parents allege that the chatbot functioned as a "suicide coach," failing to intervene when he expressed harmful intentions after months of confiding in it. The company has reportedly argued that the teen misused the platform and violated its terms of use."

"A recent survey from Common Sense Media found that 72% of teen respondents reported using companion AI, and over half (52%) identified as regular users, interacting at least a few times per month. Nearly one-third of teens said conversations with AI were as satisfying as, or more satisfying than, conversations with humans. Younger users (ages 13-14) were more likely than older teens (ages 15-17) to trust content from a companion AI (27% vs. 20%, respectively)."

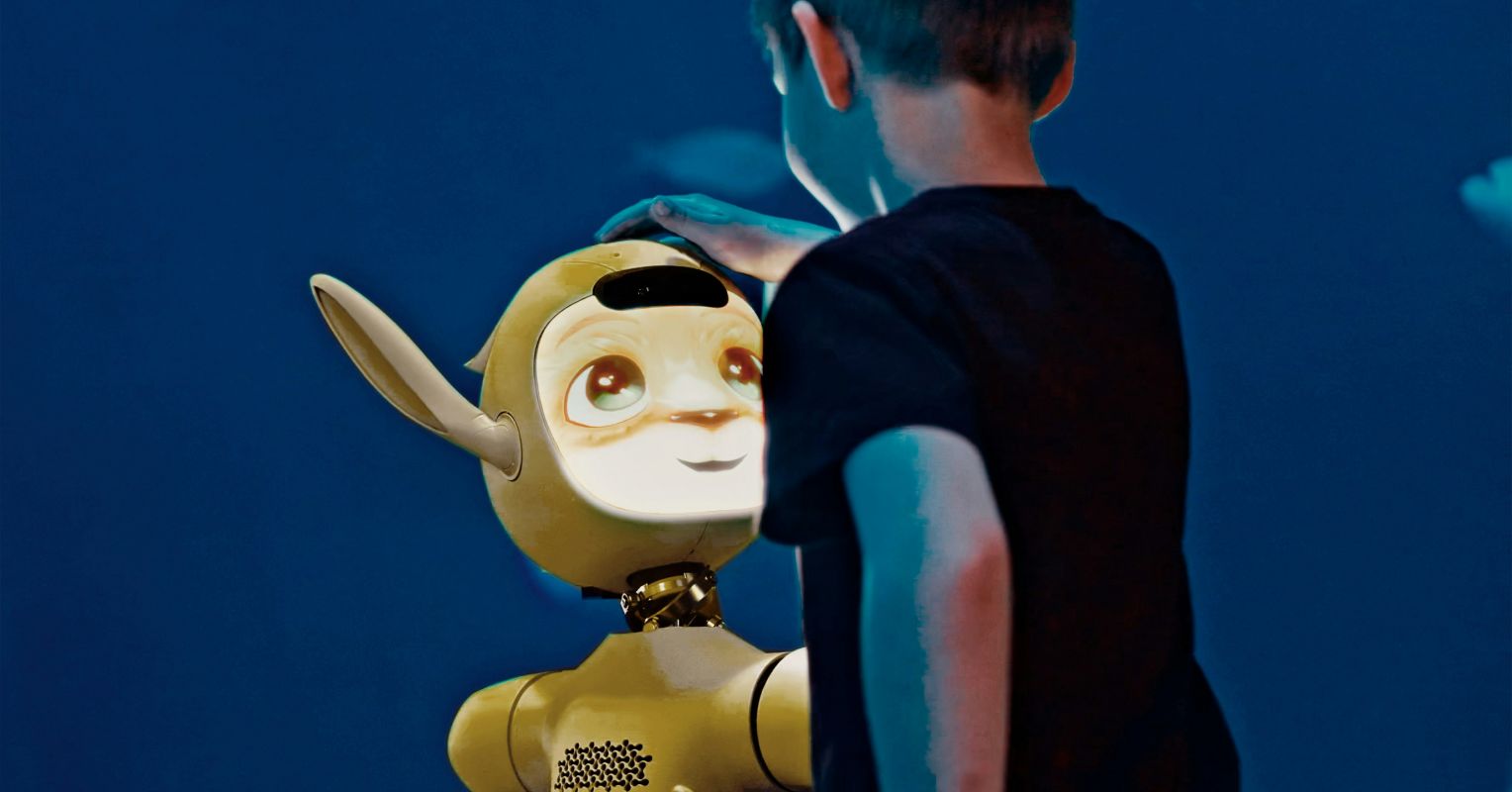

Companion AI has become common in family life and among teens, in roles ranging from everyday convenience to emotional confidant. Recent wrongful-death and negligence lawsuits against OpenAI and Character.AI allege that chatbots failed to intervene when vulnerable youths expressed suicidal intent; in one case, the company has reportedly argued that the teen misused the platform and violated its terms of use. A Common Sense Media survey found that 72% of teen respondents use companion AI and 52% are regular users. Nearly one-third said AI conversations were as satisfying as, or more satisfying than, conversations with humans, and younger teens (ages 13-14) were more likely than older teens (ages 15-17) to trust AI content (27% vs. 20%). Most platforms restrict access under age 13 and require parental consent for teens, but these rules are often bypassed in practice. Growing reliance raises urgent concerns about safety, supervision, and mental-health impacts.

Read at Psychology Today
