"If artificial intelligence is going to revolutionize the way science is done, as many of the frontier AI laboratories hope, it needs to master board games first. That's the lesson from a recent study of AI models' decision-making skills, tested with the game Battleship. The goal was to find ways for models to be more careful with limited resources: 'cheap interventions for information seeking,' as research scientist Valerio Pepe puts it."

"Science requires lots of decisions: researchers must choose which hypotheses to pursue and which simulations to run. The choices will determine which path to follow when resources for experiments are limited. 'You can get only so much data because getting data is either expensive or time-consuming,' says Pepe, who led work on the project before joining OpenAI."

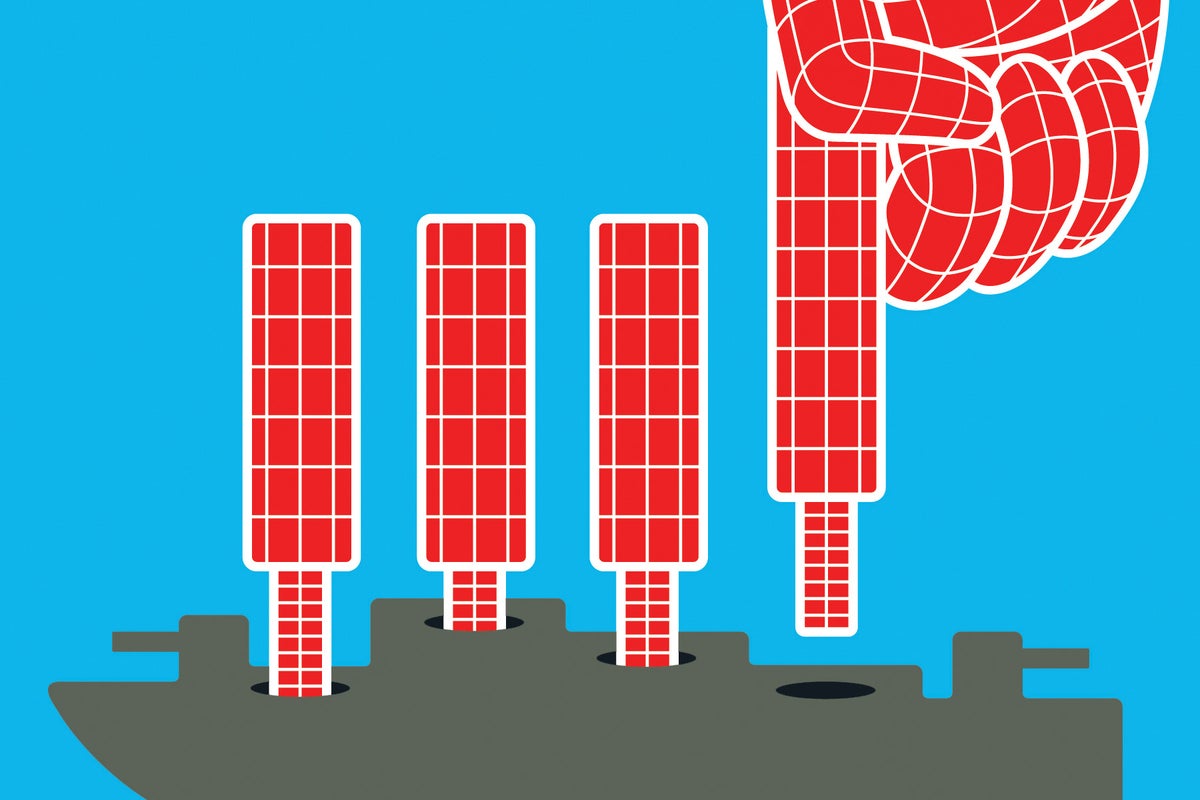

"The researchers designed a collaborative version of Battleship that could be played by humans or AI. In the game, one team member generated questions about the map of ships' locations while another answered them, in a combined effort to pinpoint where the vessels were hidden and sink them. By counting how many rounds it took to sink all the ships, the researchers could test how large language models (LLMs) performed compared with other LLMs and with the 42 human players the group had enlisted."

"Initially, humans consistently won in fewer moves than Llama-4-Scout, Meta's efficiency-focused AI model. OpenAI's premier reasoning model, GPT-5, performed better than both."

According to a recent study, AI systems that aim to transform science must first master board games that demand careful decisions with limited resources. The study used Battleship to evaluate how efficiently models seek information and act on it. Scientific work involves choosing which hypotheses to pursue and which simulations to run, and those choices shape progress when experiments are costly or time-consuming. The researchers created a collaborative version of Battleship in which one participant asks questions about the ships' locations and another answers them, so that together they can find and sink the hidden vessels. Performance was measured by the number of rounds needed to sink all the ships, which let the researchers compare large language models with one another and with 42 human players. Humans initially needed fewer moves than Meta's Llama-4-Scout, while OpenAI's GPT-5 outperformed both.

#ai-decision-making #large-language-models #board-game-evaluation #information-seeking #resource-constraints

Read at www.scientificamerican.com
