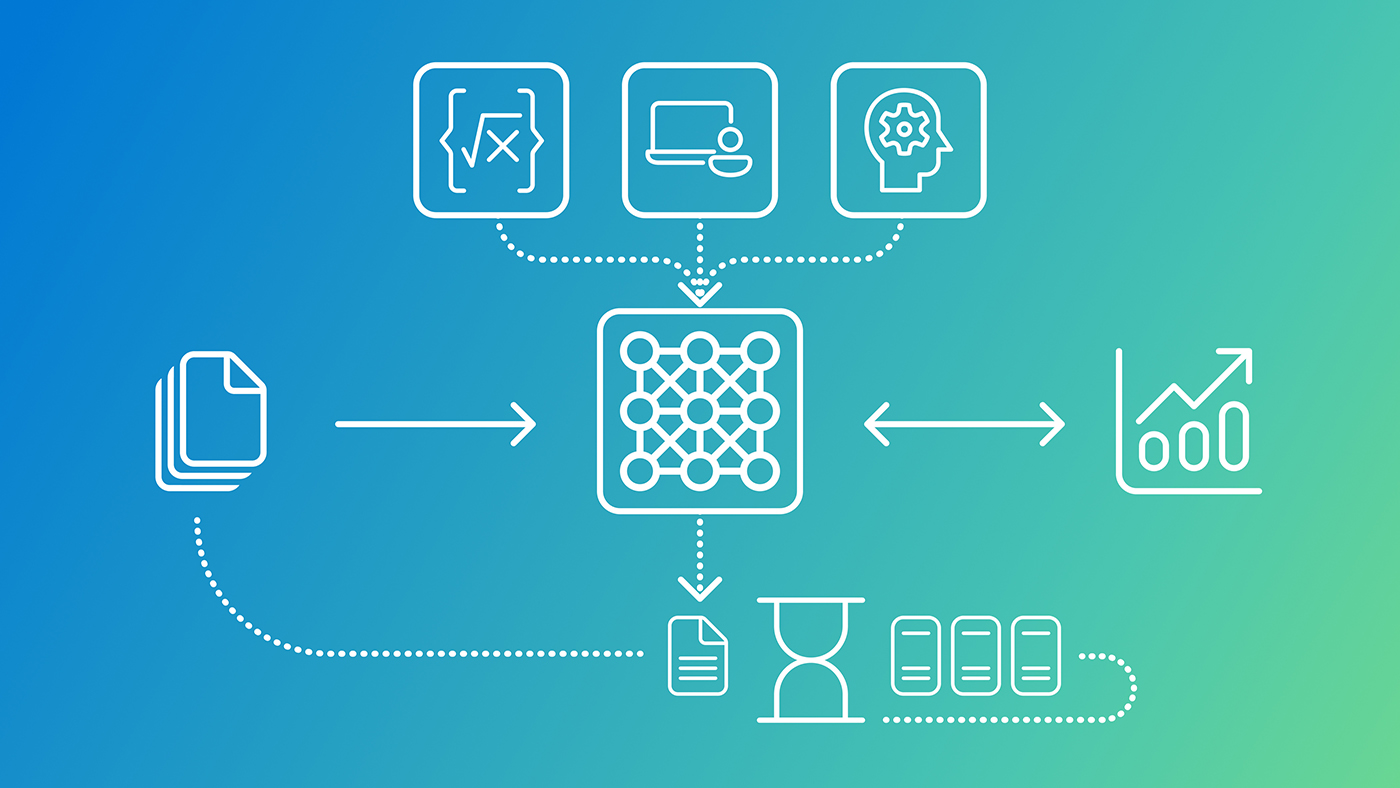

"The model builds on two existing algorithms: SigLIP-2 and Phi-4 Reasoning, a reasoning model that Microsoft made available as open source last year. SigLIP-2 converts images into a numerical format that neural networks can process. The two algorithms are combined using a technique called mid-fusion. Unlike models in which all layers support multimodal processing, in Phi-4-reasoning-vision-15B only some of the layers do so."

"It is noteworthy that the reasoning functionality can be enabled and disabled via prompts. Users who want to further reduce the infrastructure load can simply disable the reasoning option. For training, Microsoft primarily used open-source data comprising images and text descriptions. The company went through a multi-step process to improve quality."

"On the MathVista_Mini benchmark, Phi-4-reasoning-vision-15B scored 17 percent higher than Google's gemma-3-12b-it. This is a benchmark specific to multimodal mathematics. The model also achieved higher scores on more than half a dozen other evaluations. Developers can use the model to build AI agents that interact with applications via the user interface."

Microsoft released Phi-4-reasoning-vision-15B, a 15-billion-parameter multimodal model that combines the SigLIP-2 image encoder with Phi-4 Reasoning using a mid-fusion architecture. In this design, only some of the model's layers perform multimodal processing, which reduces hardware requirements while maintaining performance. The model can analyze images, scientific graphs, and screen interfaces. Reasoning can be switched on or off via prompts, and disabling it reduces the infrastructure load further. Training relied primarily on open-source image-text data, which Microsoft refined in a multi-step process that included quality filtering and GPT-4o-generated captions, supplemented by internal datasets. On MathVista_Mini, a benchmark for multimodal mathematics, the model scored 17% higher than Google's gemma-3-12b-it, and it also achieved higher scores on more than half a dozen other evaluations. Developers can use it to build AI agents that interact with applications through their user interfaces and perform visual analysis tasks.

Read at Techzine Global