"The fundamental challenge is validating LLM-based support systems before production: How do you test a chatbot that never answers the same way twice? Customer support automation has traditionally relied on deterministic decision trees, where users follow predefined paths based on menu selections or keywords. Such workflows allowed developers to validate changes with conventional tests. LLM-powered agents, however, handle natural conversations, meaning small adjustments to prompts, context, or backend integrations can produce unpredictable outcomes across multiple conversation paths."

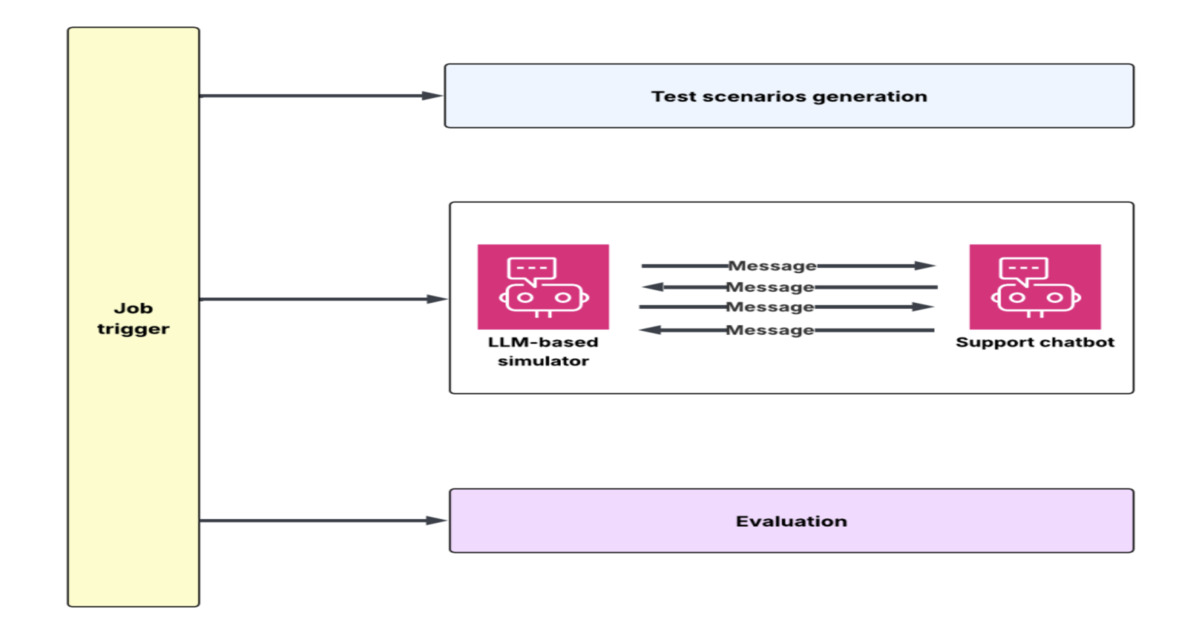

"To address this, DoorDash built an offline experimentation framework combining an LLM-powered customer simulator with an automated evaluation system. The simulator generates multi-turn conversations reflecting real customer interactions, using historical support transcripts to derive customer intents, conversation flows, and behavioral patterns. Backend dependencies, such as order lookups or refund workflows, are reproduced with mocked service APIs, enabling realistic operational scenarios."
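As a rough illustration of mocking backend dependencies, the sketch below replaces an order lookup and a refund workflow with an in-memory stand-in. The class name `MockOrderService`, its methods, and the response fields are hypothetical and chosen for illustration only. They are not DoorDash's actual service APIs.

```python
from dataclasses import dataclass, field


@dataclass
class MockOrderService:
    """Hypothetical in-memory stand-in for order lookup and refund backends."""
    orders: dict = field(default_factory=dict)
    refunds: list = field(default_factory=list)

    def lookup_order(self, order_id: str) -> dict:
        # Return a fixed record so every simulated run sees the same state.
        order = self.orders.get(order_id)
        if order is None:
            return {"status": "not_found", "order_id": order_id}
        return {"status": "ok", **order}

    def issue_refund(self, order_id: str, amount: float) -> dict:
        # Assumed business rule for the sketch: never refund more than the total.
        order = self.orders.get(order_id)
        if order is None:
            return {"status": "not_found", "order_id": order_id}
        if amount > order["total"]:
            return {"status": "rejected", "reason": "amount_exceeds_total"}
        # Record the call so a test can assert on what the chatbot did.
        self.refunds.append((order_id, amount))
        return {"status": "refunded", "order_id": order_id, "amount": amount}


# Seed the mock with one known order for a simulated scenario.
service = MockOrderService(
    orders={"A100": {"order_id": "A100", "total": 25.0, "state": "delivered"}}
)
```

Because the mock records every refund call, a test can check what the chatbot actually did to backend state, not just the wording of its reply.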

"Context engineering improvements validated through this framework reduced hallucination rates by roughly 90 percent before deployment. In the simulation environment, an LLM plays the customer while the production chatbot responds as it would in a real interaction. The simulator adapts to the chatbot's responses, handling scenarios such as clarification requests, frustration signals, or repeated issues."
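The turn-taking loop described here can be sketched minimally as follows. Both participants are scripted stand-ins so the example runs without a model. In a real setup each function would wrap an LLM call. The function names, the `<END>` sentinel, and the turn limit are assumptions made for this sketch, not details from the article.

```python
from typing import Callable, List, Tuple

Turn = Tuple[str, str]  # (speaker, message)


def run_simulation(customer: Callable[[List[Turn]], str],
                   chatbot: Callable[[List[Turn]], str],
                   max_turns: int = 6) -> List[Turn]:
    """Alternate customer and chatbot turns until the customer ends the chat."""
    transcript: List[Turn] = []
    for _ in range(max_turns):
        customer_msg = customer(transcript)
        if customer_msg == "<END>":  # hypothetical end-of-conversation sentinel
            break
        transcript.append(("customer", customer_msg))
        transcript.append(("chatbot", chatbot(transcript)))
    return transcript


def scripted_customer(transcript: List[Turn]) -> str:
    # Stand-in for an LLM-driven customer that reacts to the last bot reply.
    if not transcript:
        return "My order A100 never arrived."
    last_bot = transcript[-1][1].lower()
    if "order number" in last_bot:
        return "It's A100, like I said."  # answers a clarification request
    if "refund" in last_bot:
        return "<END>"  # issue resolved, stop the conversation
    return "This is frustrating. Can I get a refund?"  # frustration signal


def scripted_chatbot(transcript: List[Turn]) -> str:
    # Stand-in for the chatbot under test.
    last_customer = transcript[-1][1].lower()
    if "refund" in last_customer:
        return "I have issued a refund for order A100."
    if len(transcript) == 1:
        return "Could you confirm your order number?"
    return "Thanks, I can see order A100 was marked delivered."


transcript = run_simulation(scripted_customer, scripted_chatbot)
for speaker, message in transcript:
    print(f"{speaker}: {message}")
```

The resulting transcript is a plain list of turns, which an automated evaluator could then score.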

DoorDash developed a simulation and evaluation flywheel to speed up testing of LLM-powered customer support chatbots. The system addresses the core challenge of validating chatbots whose responses vary from run to run by combining an LLM-powered customer simulator with automated evaluation. The simulator generates multi-turn conversations, using historical support transcripts to derive customer intents and behavioral patterns, while mocked service APIs reproduce backend dependencies such as order lookups and refunds. An LLM plays the customer role and adapts to the chatbot's replies, including clarification requests and frustration signals, while the production chatbot responds as it would in a real interaction. This offline experimentation framework lets engineers run hundreds of simulated conversations within minutes, shortening development cycles and validating context engineering improvements before production.

#llm-testing-and-validation #customer-support-automation #simulation-framework #hallucination-reduction #ai-chatbot-development

Read at InfoQ
