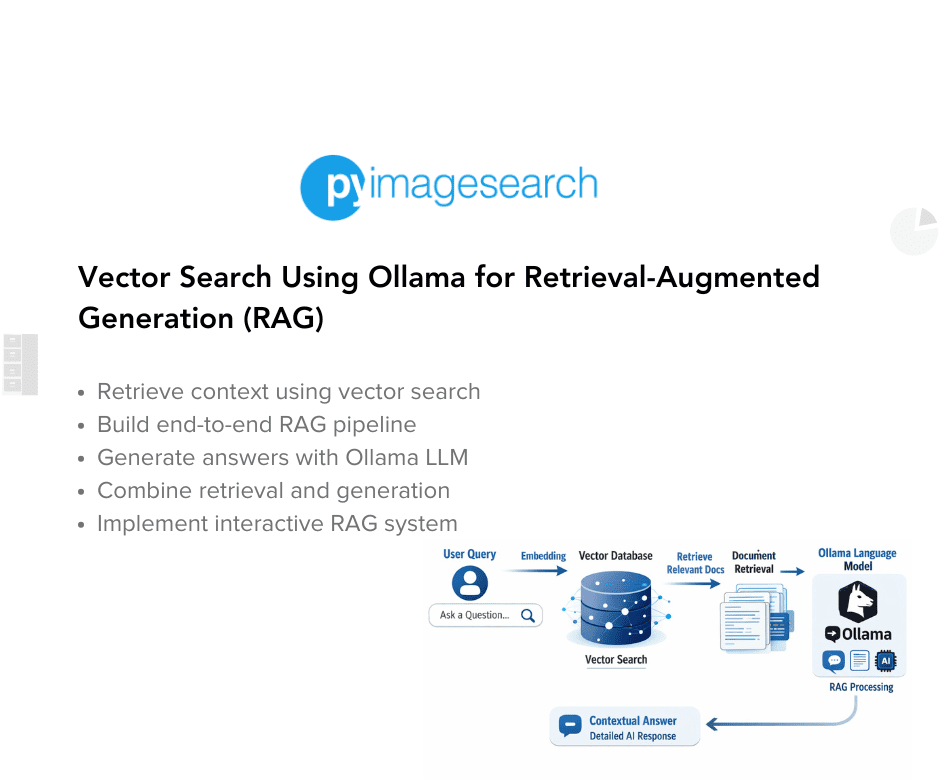

Retrieval-Augmented Generation (RAG) pairs high-quality vector retrieval with a language model, so the LLM can fetch external facts before generating an answer, which improves accuracy and grounding. Embeddings convert sentences into high-dimensional vectors that capture semantic similarity beyond exact word matches. At scale, nearest-neighbor search relies on specialized FAISS indexes: Flat performs exact brute-force search, while HNSW and IVF perform fast approximate nearest neighbor (ANN) search with only a small loss in precision. Combining FAISS-based vector search with an LLM lets the model retrieve relevant context from proprietary data sources and then reason over that evidence to produce accurate, up-to-date, evidence-grounded responses.
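"Semantic similarity" between embeddings is commonly measured with cosine similarity, the cosine of the angle between two vectors. The minimal sketch below uses only the standard library; the three 4-dimensional vectors are made-up stand-ins for what a real sentence-embedding model would produce (real models output hundreds of dimensions), and `cosine_similarity` is a hypothetical helper, not a library API.

```python
import math

def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between a and b: 1.0 = same direction, 0.0 = orthogonal."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical toy "embeddings" (assumption: invented values for illustration).
vectors = {
    "How do I reset my password?": [0.9, 0.1, 0.0, 0.2],
    "Steps to recover account login": [0.8, 0.2, 0.1, 0.3],
    "Quarterly revenue grew 12%": [0.0, 0.9, 0.8, 0.1],
}

# Score every sentence against the first one; the two sentences share no
# words, yet their vectors point in nearly the same direction.
query = vectors["How do I reset my password?"]
for text, vec in vectors.items():
    print(f"{cosine_similarity(query, vec):.3f}  {text}")
```

Because cosine similarity depends only on direction, not length, many pipelines L2-normalize embeddings once and then rank with a plain inner product, which yields the same ordering.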

"RAG is the bridge between retrieval and reasoning - it lets your LLM (large language model) access facts it hasn't memorized. Instead of relying solely on pre-training, the model fetches relevant context from your own data before answering, ensuring responses that are accurate, up-to-date, and grounded in evidence. Think of it as asking a well-trained assistant a question: they don't guess - they quickly look up the right pages in your company wiki, then answer with confidence."
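The look-up-then-answer workflow described in the quote can be sketched in a few lines: retrieve the top-k passages by cosine similarity with an exact brute-force scan, then place them in the prompt so the model answers from evidence. Everything here is an assumption for illustration: the wiki passages and their 3-dimensional vectors are invented (a real system would obtain vectors from an embedding model and search a FAISS index), `retrieve` and `build_prompt` are hypothetical helpers, and the actual LLM call is omitted.

```python
import heapq
import math

def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

def retrieve(query_vec, docs, k=2):
    """Return the k passages most similar to query_vec (exact, brute-force scan)."""
    scored = ((cosine(query_vec, vec), text) for text, vec in docs)
    return [text for _, text in heapq.nlargest(k, scored)]

def build_prompt(question, passages):
    """Ground the LLM: number the retrieved passages and ask it to answer only from them."""
    context = "\n".join(f"[{i}] {p}" for i, p in enumerate(passages, start=1))
    return (
        "Answer using only the context below. If the answer is not there, say so.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}\nAnswer:"
    )

# Hypothetical "company wiki" passages with invented embedding vectors.
docs = [
    ("VPN access requires a ticket to IT Support.", [0.1, 0.9, 0.0]),
    ("Passwords can be reset from the self-service portal.", [0.9, 0.1, 0.1]),
    ("Account lockouts clear automatically after 30 minutes.", [0.7, 0.2, 0.3]),
]
question = "How do I get back into my account?"
query_vec = [0.85, 0.15, 0.2]  # assumed embedding of the question

prompt = build_prompt(question, retrieve(query_vec, docs, k=2))
print(prompt)  # this string would then be sent to the LLM (call not shown)
```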

"Each sentence became a vector - a point in high-dimensional space - where semantic closeness means directional similarity. Instead of matching exact words, embeddings capture meaning. In , we tackled the scale problem: when millions of such vectors exist, finding the nearest ones efficiently demands specialized data structures such as FAISS indexes - Flat, HNSW, and IVF. These indexes allow us to perform lightning-fast approximate nearest neighbor (ANN) searches with only a small trade-off in precision."
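To show where the small precision trade-off comes from, here is a toy, pure-Python sketch of the idea behind an inverted-file (IVF) index; it is not FAISS's implementation. k-means splits the vectors into `nlist` clusters, each vector is stored in its nearest cluster's list, and a query scans only the `nprobe` closest lists instead of every vector. `ToyIVFIndex`, `kmeans`, and `brute_force` are hypothetical names; `nlist` and `nprobe` borrow FAISS's parameter names. `brute_force` plays the role of a Flat index: exact, but it touches every vector.

```python
import random

def sq_l2(a, b):
    """Squared Euclidean distance."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def kmeans(vectors, n_clusters, iters=10, seed=0):
    """Plain Lloyd's k-means; returns the cluster centroids."""
    rng = random.Random(seed)
    centroids = [list(v) for v in rng.sample(vectors, n_clusters)]
    for _ in range(iters):
        buckets = [[] for _ in range(n_clusters)]
        for v in vectors:
            nearest = min(range(n_clusters), key=lambda c: sq_l2(v, centroids[c]))
            buckets[nearest].append(v)
        for c, members in enumerate(buckets):
            if members:  # keep the old centroid if a cluster ends up empty
                centroids[c] = [sum(col) / len(members) for col in zip(*members)]
    return centroids

class ToyIVFIndex:
    def __init__(self, vectors, nlist, seed=0):
        self.vectors = vectors
        self.centroids = kmeans(vectors, nlist, seed=seed)
        # Inverted lists: ids of the vectors assigned to each centroid.
        self.lists = [[] for _ in range(nlist)]
        for i, v in enumerate(vectors):
            c = min(range(nlist), key=lambda j: sq_l2(v, self.centroids[j]))
            self.lists[c].append(i)

    def search(self, query, k, nprobe=1):
        # Probe only the nprobe lists whose centroids are closest to the query.
        order = sorted(range(len(self.centroids)), key=lambda j: sq_l2(query, self.centroids[j]))
        candidates = [i for j in order[:nprobe] for i in self.lists[j]]
        return sorted(candidates, key=lambda i: (sq_l2(query, self.vectors[i]), i))[:k]

def brute_force(vectors, query, k):
    """Exact search over every vector (the 'Flat' baseline)."""
    return sorted(range(len(vectors)), key=lambda i: (sq_l2(query, vectors[i]), i))[:k]

rng = random.Random(42)
data = [[rng.random() for _ in range(8)] for _ in range(500)]
index = ToyIVFIndex(data, nlist=10)
query = [rng.random() for _ in range(8)]

exact = brute_force(data, query, k=5)
approx = index.search(query, k=5, nprobe=2)
recall = len(set(exact) & set(approx)) / len(exact)
print(f"recall@5 with nprobe=2: {recall:.2f}")
```

Raising `nprobe` scans more lists, which raises both recall and cost; with `nprobe` equal to `nlist`, the search degenerates into the exact Flat scan.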

Read at PyImageSearch
