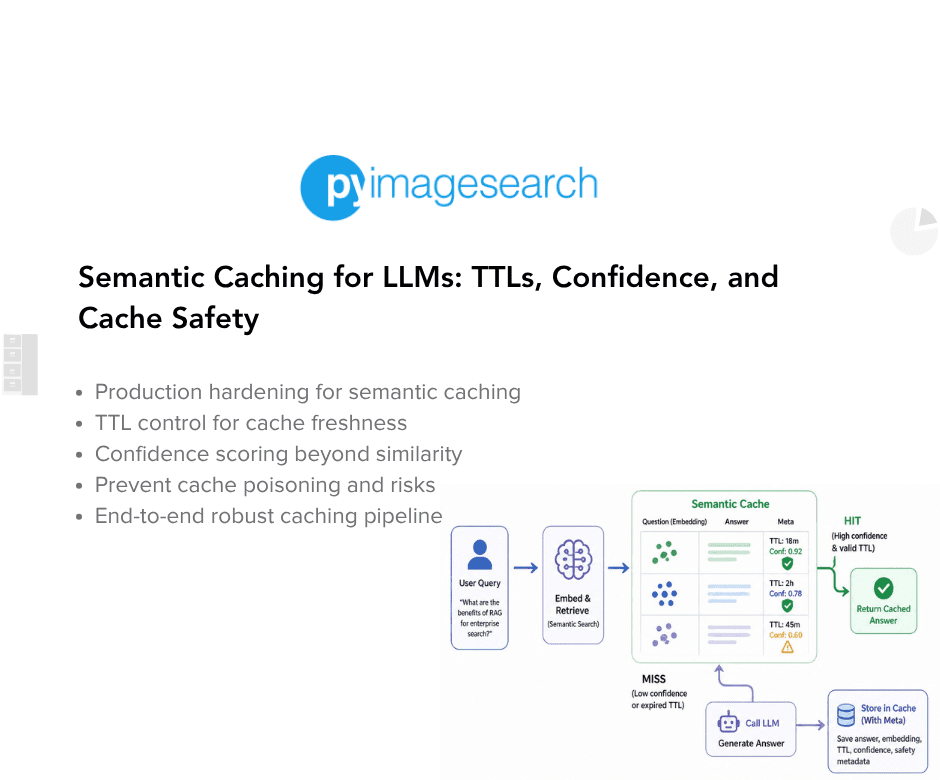

Harden a semantic cache for LLMs to enhance reliability and safety in production. The lesson builds on a previous prototype that avoided redundant LLM calls and handled paraphrased inputs. However, real-world usage introduces challenges such as outdated cached responses, errors from LLMs, duplicate entries, and the validity of answers over time. Addressing these issues is crucial for maintaining user trust and system performance in long-term operations.

"A semantic cache that works under ideal conditions can still fail in subtle and dangerous ways when exposed to real users, long-running processes, and evolving information. These failures do not usually appear as crashes or explicit errors. Instead, they show up as silent correctness issues, degraded user trust, and unpredictable behavior over time."

"What happens if the LLM returns an error or partial output? What if the cache slowly fills with duplicates? What if similarity is high but the answer is no longer valid? Those questions only matter once the system runs for days or weeks, not minutes."

Read at PyImageSearch

Unable to calculate read time

Collection

[

|

...

]