"Requiring a company to rewrite its code to remove guardrails means compelling different expression, a clear constitutional violation. Further, the public record shows that the SCR designation is intended to punish the company both for pushing back and for its CEO's public statements explaining that AI may supercharge surveillance practices that current law has proven ill-equipped to address."

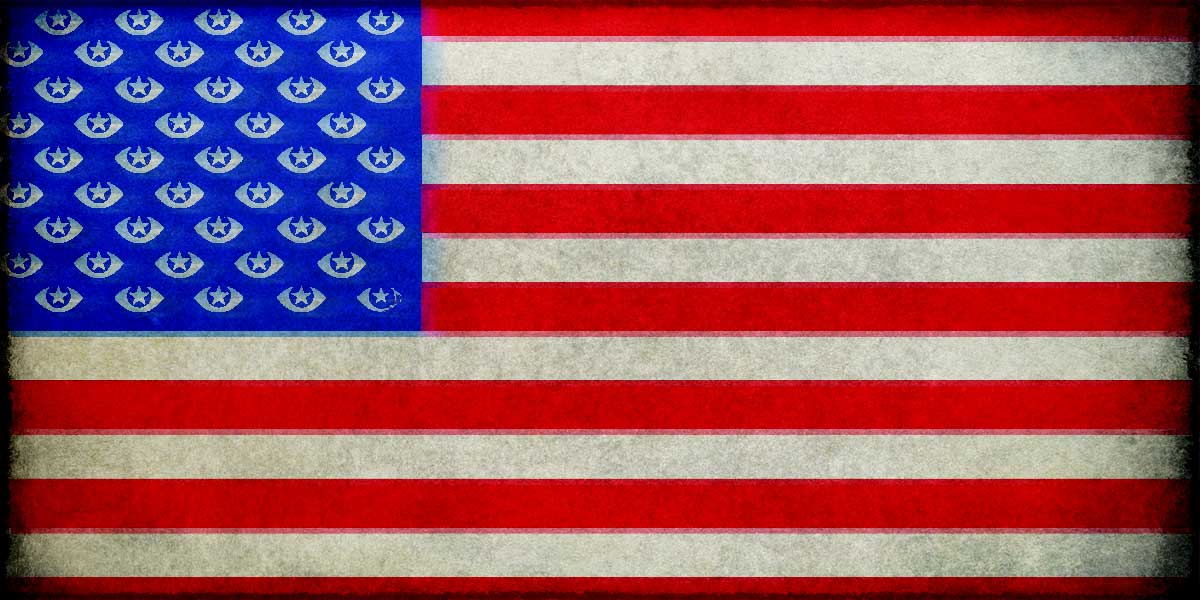

"The U.S. government has a long history of illegally surveilling its citizens without adequate judicial oversight based on questionable interpretations of its Constitutional and statutory obligations. The Department of Defense acquires vast troves of personal information from commercial entities, including individuals' physical location, social media, and web browsing data."

Anthropic refused to allow the Pentagon to use its technology for domestic surveillance, leading the Department of Defense to designate the company a supply chain risk (SCR). Anthropic is now asking a court to block the designation, arguing that it violates the First Amendment. The company argues that developing and operating large language models involves protected expressive choices, and that requiring it to rewrite its code to remove guardrails is unconstitutional compelled speech. Public interest organizations support Anthropic's position, citing the government's documented history of illegal surveillance without adequate judicial oversight. The Pentagon acquires extensive personal data from commercial sources, including location information, social media activity, and browsing history, which raises concerns about how AI technology could be misused.

Read at Electronic Frontier Foundation
