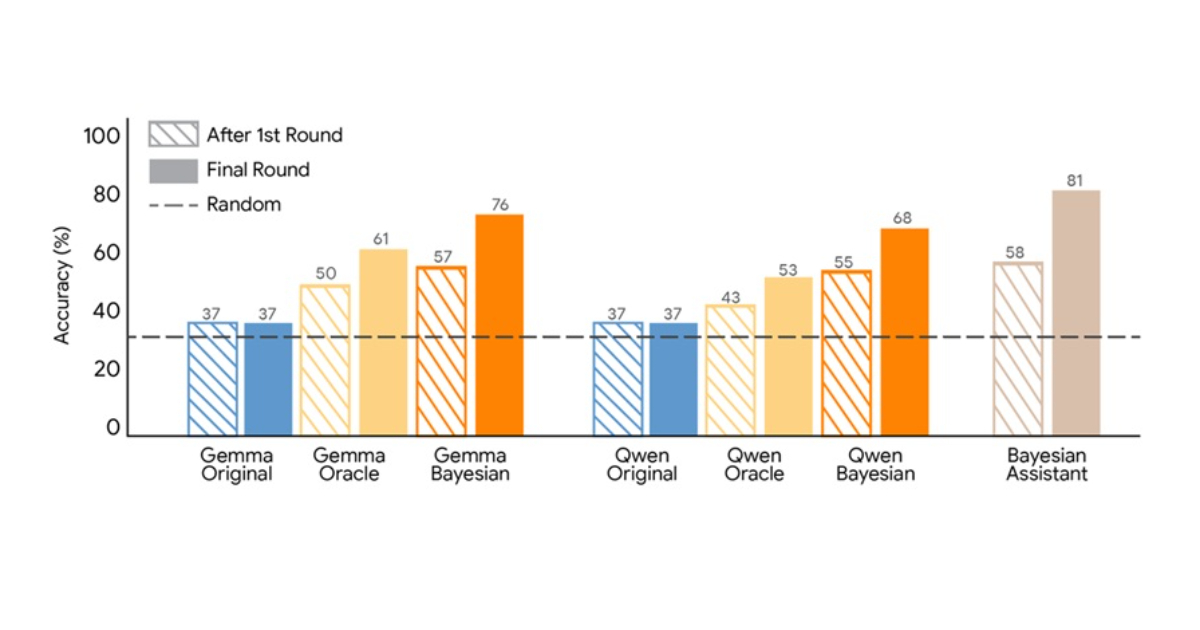

Google researchers propose Bayesian teaching, a training method in which large language models learn Bayesian reasoning from an optimal Bayesian system. The study asks how well models update their beliefs as new information arrives during an interaction, and specifically whether they can infer a user's preferences gradually. In a simulated flight recommendation task run over five rounds, the researchers compared language models against a Bayesian assistant that maintains a probability distribution over possible user preferences and applies Bayes' rule after each interaction. The Bayesian assistant reached about 81% accuracy. Standard language models performed worse and showed limited improvement after the first interaction, which suggests they were not updating their beliefs effectively. The researchers then tested Bayesian teaching as a way to improve model performance.

"The study examines how language models update beliefs when interacting with users over time. In many real-world applications, such as recommendation systems, models need to infer user preferences gradually based on new information. Bayesian inference provides a mathematical framework for updating probabilities as new evidence becomes available."

"The researchers compared several language models with a Bayesian assistant that maintains a probability distribution over possible user preferences and updates it using Bayes' rule after each interaction. In the experiment, the Bayesian assistant reached about 81% accuracy in selecting the correct option. Language models performed worse and often showed limited improvement after the first interaction."
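To make the belief-updating loop concrete, here is a minimal sketch of a Bayesian assistant for a toy flight task. It is not the researchers' implementation: the three preference profiles in `HYPOTHESES`, the two normalized features (price, duration), the softmax choice model, and the `temperature` value are all assumptions chosen for illustration. The sketch only shows the mechanics named above: keep a distribution over possible preferences, then apply Bayes' rule after each observed choice.

```python
import math

# Hypothetical preference profiles: weights on (price, duration), both
# normalized to [0, 1]. A negative weight means "lower is better".
HYPOTHESES = {
    "cheap": (-1.0, 0.0),
    "fast": (0.0, -1.0),
    "balanced": (-0.5, -0.5),
}


def utility(weights, flight):
    """Linear utility of one flight under one preference profile."""
    return sum(w * x for w, x in zip(weights, flight))


def choice_likelihood(weights, options, chosen, temperature=0.2):
    """P(user picks options[chosen] | weights) under an assumed softmax model."""
    scores = [utility(weights, f) / temperature for f in options]
    top = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - top) for s in scores]
    return exps[chosen] / sum(exps)


def bayes_update(prior, options, chosen):
    """Bayes' rule: posterior is proportional to prior times likelihood."""
    unnormalized = {
        h: p * choice_likelihood(HYPOTHESES[h], options, chosen)
        for h, p in prior.items()
    }
    total = sum(unnormalized.values())
    return {h: p / total for h, p in unnormalized.items()}


def recommend(belief, options):
    """Index of the option with the highest expected utility under the belief."""
    def expected_utility(flight):
        return sum(p * utility(HYPOTHESES[h], flight) for h, p in belief.items())
    return max(range(len(options)), key=lambda i: expected_utility(options[i]))


def run_demo():
    """Five rounds in which a simulated user always picks the cheapest flight."""
    belief = {h: 1.0 / len(HYPOTHESES) for h in HYPOTHESES}  # uniform prior
    rounds = [
        ([(0.2, 0.9), (0.9, 0.2), (0.5, 0.5)], 0),
        ([(0.8, 0.1), (0.3, 0.7), (0.6, 0.4)], 1),
        ([(0.4, 0.6), (0.1, 0.8), (0.7, 0.3)], 1),
        ([(0.9, 0.3), (0.6, 0.5), (0.3, 0.9)], 2),
        ([(0.25, 0.85), (0.75, 0.25), (0.5, 0.55)], 0),
    ]
    for options, chosen in rounds:
        belief = bayes_update(belief, options, chosen)
    return belief


if __name__ == "__main__":
    final_belief = run_demo()
    for name, prob in sorted(final_belief.items(), key=lambda kv: -kv[1]):
        print(f"{name}: {prob:.3f}")
```

In this toy setup the posterior shifts toward the `cheap` profile with every round, which is the gradual improvement the excerpt describes for the Bayesian assistant. A model that stops updating after the first interaction would, by contrast, keep roughly the same belief from round two onward.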

#bayesian-reasoning #language-models #belief-updating #machine-learning-training #recommendation-systems

Read at InfoQ
