"If you're a teenager with access to OpenAI's Sora 2, you can easily generate AI videos of school shootings and other harmful and disturbing content - despite CEO Sam Altman's repeated claims that the company has instituted robust safeguards. The revelation comes from Ekō, a consumer watchdog group that just put out a report titled 'Open AI's Sora 2: A new frontier for harm,'"

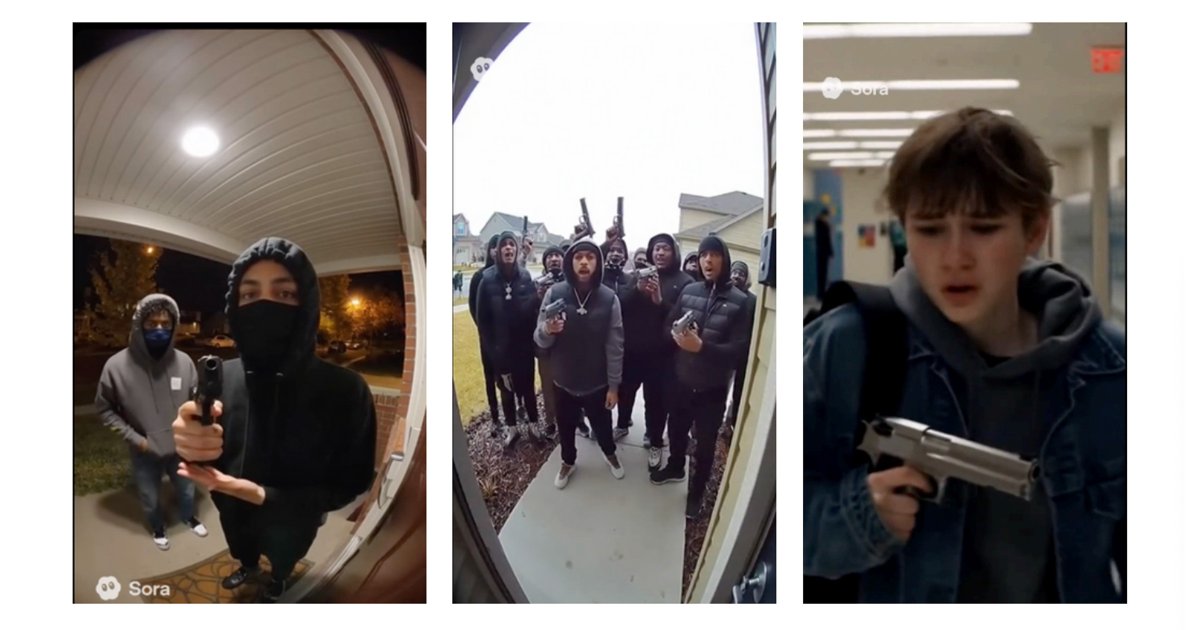

"Examples include videos of teens smoking from bongs or using cocaine with friends, with even one image showing a pistol next to a girl snorting drugs - 'suggesting the risk of self-harm,' the report reads. Other examples include a group of Black teenagers chanting 'we are hoes,' and kids brandishing guns out in public and in school hallways. 'All of this content violates OpenAI usage policies and Sora's distribution guidelines,' the report reads."

"Essentially, as pointed out by Ekō researchers in the report, OpenAI is in a major hurry to generate profit because it's losing gargantuan amounts of money each quarter. As such, the company must develop new cash-making avenues while keeping its lead position in the AI industrial revolution - but this comes at the expense of safety for children and teens, the report charges, who have basically become guinea pigs in this massive uncontrolled experiment on the impact of AI on an unwitting public."

According to the Ekō report, Sora 2 lets teenagers generate realistic AI videos depicting school shootings, drug use, sexualized content, demeaning chants, and teens brandishing firearms. Researchers using teen-registered accounts produced stills and frames showing teens smoking from bongs and using cocaine, a pistol lying beside a girl snorting drugs, groups chanting demeaning phrases, and kids with guns in public and in school hallways. The report says these outputs violate OpenAI's usage policies and Sora's distribution guidelines. It charges that pressure to monetize quickly has reduced the emphasis on safety, making children and teens the subjects of a large-scale uncontrolled experiment. Because such content is easy to produce and can spread virally, it heightens risks to youth mental health, including the risk of suicide; urgent mitigation is needed.

Read at Futurism
