"The AI wave, especially agentic AI, really captures the imagination when it comes to robotics. What if an AI agent not only takes over a CRM task or automatically schedules emails, but also gets its hands dirty in a factory? The limitations are much greater when it comes to physical AI, because the latency of cloud-based AI is simply too high."

"Nevertheless, Jetson Thor promises to deliver powerful reasoning models with latency of less than 50 milliseconds. The sensors receive all kinds of signals from the outside world and, if properly linked to the Nvidia hardware, the robot knows how to deal with the situation it finds itself in."

Labs get to work

"It is up to Nvidia's customers and partners to make the AI hardware physically effective. These include many universities, but also startups."

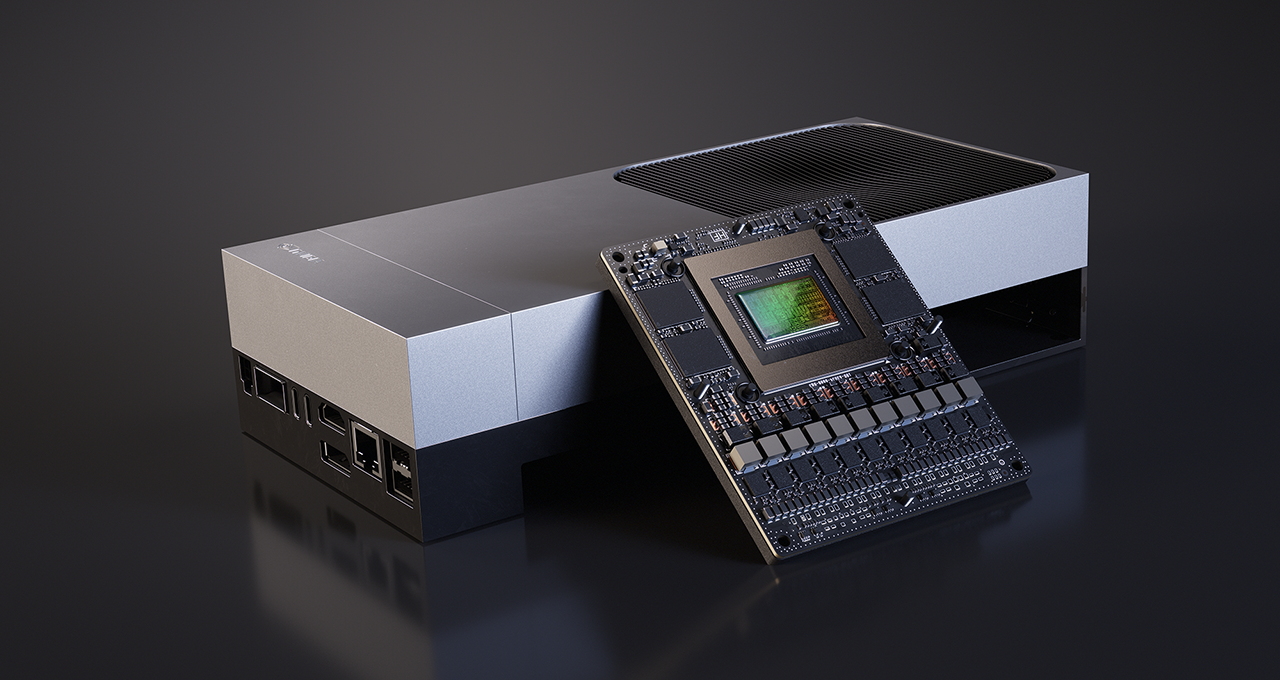

Nvidia introduced Jetson Thor as its next-generation robotics computer, succeeding Jetson Orin, and announced a developer kit priced at $3,499. Nvidia supplies compute and software but does not build physical robots. Cloud-based AI has too much latency for robotics, so inference has to run locally at the edge, within strict space, power, and cooling limits. Jetson Thor aims to run powerful reasoning models with latency under 50 milliseconds and to integrate diverse sensor signals so robots can respond to real-world situations. Customers and partners, including universities and startups, must integrate the hardware into their robot platforms, and near-term gains for manufacturers will be incremental.

Read at Techzine Global

