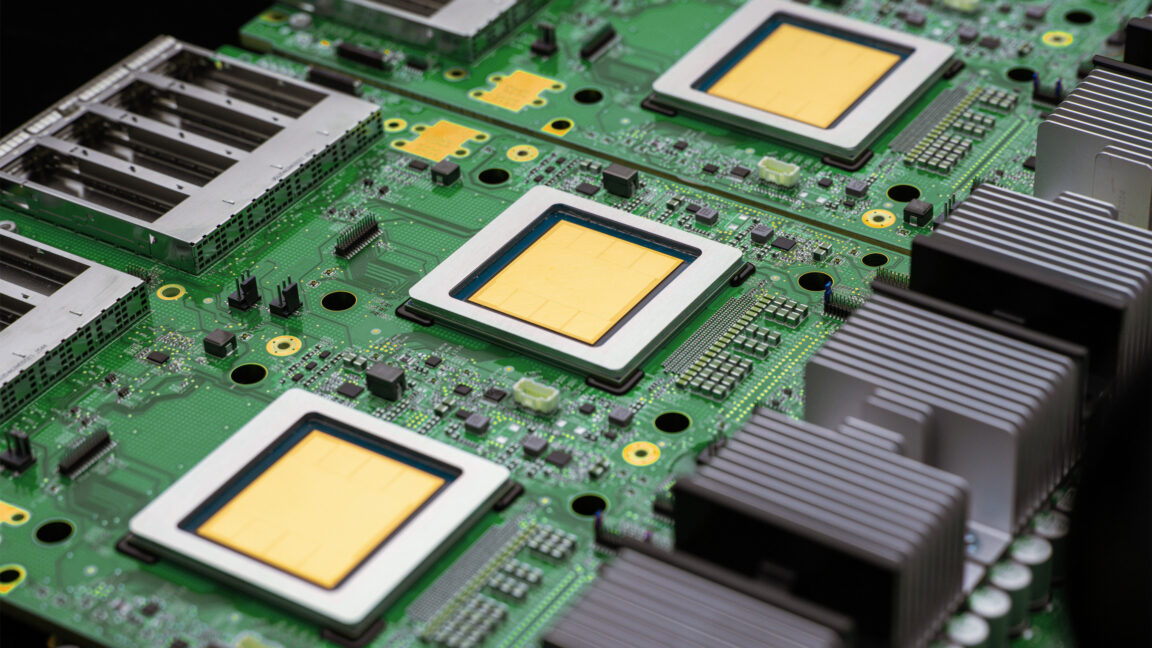

"The TPU 8t allows for faster training and boasts a 'goodput' rate of 97 percent, minimizing waiting and wasted effort while advancing model training more effectively."

"TPU 8i is designed for inference efficiency, operating in larger pods of 1,152 chips, which significantly reduces waiting time compared to previous generations."

"With 384 MB of on-chip SRAM, TPU 8i can maintain a larger key-value cache, enhancing performance for models with longer context windows."

"Google's full-stack ARM-based approach, featuring one CPU for every two TPUs, allows for greater efficiency compared to the previous x86 CPU setup."

TPU 8t chips provide faster training with a 97 percent goodput rate, which reduces wasted effort, and they are built to handle irregular memory access and hardware faults. Inference is handled by TPU 8i, which is designed for efficiency: it operates in larger pods of 1,152 chips and cuts waiting time compared with previous generations. TPU 8i also carries 384 MB of on-chip SRAM, enough to hold a larger key-value cache, and relies on a custom ARM CPU rather than the previous x86 setup. With the new TPU architecture, Google aims to make training and running AI models more cost-effective at a time when generative AI remains expensive.
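To put the 97 percent figure in concrete terms, here is a minimal sketch of the arithmetic. It assumes goodput means the fraction of total run time spent on useful training progress. The function name and the 1,000-hour run are hypothetical and do not come from the article.

```python
def wasted_hours(total_hours: float, goodput: float) -> float:
    """Hours of a run NOT spent on useful progress, given a goodput fraction.

    Assumption: goodput = useful time / total time, so the remainder
    (1 - goodput) is time lost to waiting, restarts, and similar overhead.
    """
    if not 0.0 <= goodput <= 1.0:
        raise ValueError("goodput must be a fraction between 0 and 1")
    return total_hours * (1.0 - goodput)


# Hypothetical 1,000-hour training run at the quoted 97 percent goodput.
lost = wasted_hours(1000, 0.97)
print(f"about {lost:.0f} of 1000 hours lost to overhead")
```

Under that reading, each percentage point of goodput is worth roughly 10 hours on a 1,000-hour run, which is why a high goodput rate bears directly on training cost.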

Read at Ars Technica
