"Uncertainty about AI timelines necessitates wise planning and collective action to ensure a positive transition. Taking the uncertainty seriously has real implications for how one can contribute to making this AI transition go well."

"AI timelines refer to how long it will be before AI has truly transformative effects on the world, often described using terms like AGI, human level AI, or superintelligence."

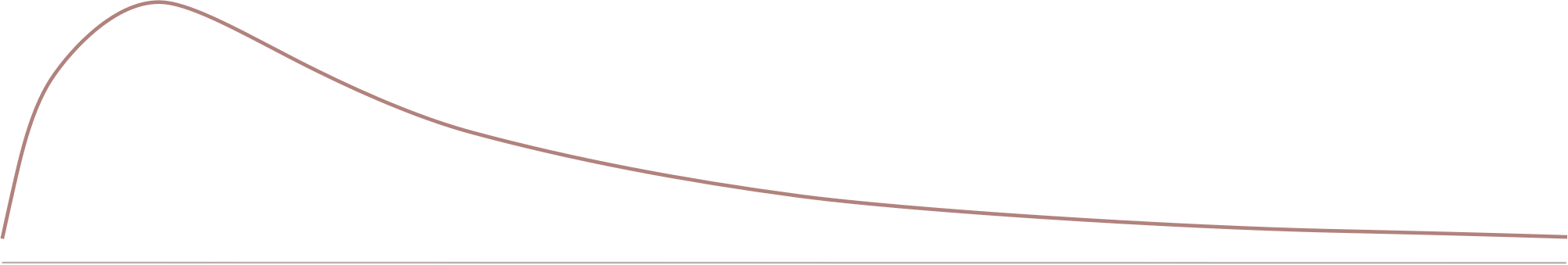

"The metaphor of hikers climbing a mountain illustrates the uncertainty of recognizing when transformative AI is achieved, highlighting the challenges of vague and subjective definitions."

"Many commentators have suggested that terms like AGI are useless due to their vagueness, but this perspective overlooks the potential for meaningful discussion and planning."

AI timelines refer to how long it will be before AI has transformative effects on the world, often described using terms like AGI or superintelligence. These terms are vague and subjective, which complicates comparisons. A metaphor of hikers climbing a mountain illustrates how uncertain it is to recognize when transformative AI has been achieved. Taking this uncertainty seriously can guide effective planning and collective action toward a positive AI transition, and it underscores the importance of epistemic humility in addressing the challenges ahead.

Read at LessWrong
