"DescrybeLM answered all 200 correctly. The general-purpose models each missed between 13 and 23 questions, achieving accuracy rates ranging from 88.5% to 93.5%. Rubric-scored reasoning quality - a separate measure evaluating whether systems correctly identified governing legal rules and applied them to the facts - followed a similar pattern. DescrybeLM scored 99.70% on that dimension."

"Among the 52 incorrect outputs produced by the three general-purpose models, 49 were flagged as 'confidently wrong' - assertive, fluent, well-structured responses that gave no signal of uncertainty. The dominant failure patterns were applying the wrong legal standard to the facts, or applying the correct standard incorrectly."

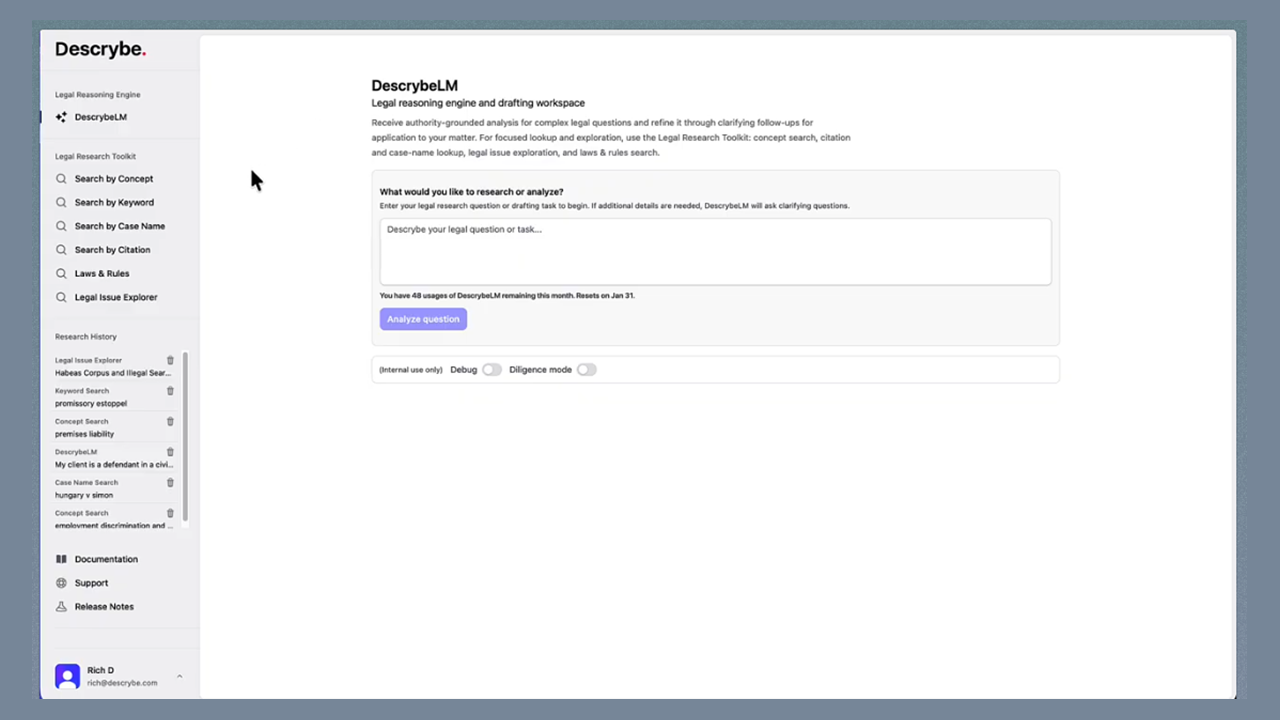

"DescrybeLM and the Legal Research Toolkit are 'built to work together,' with the latter used to find the relevant law that bears on a question and the former then enabling users to reason through it against the specific facts of the matter at hand."

Descrybe has introduced DescrybeLM, a specialized legal reasoning product designed to work alongside its Legal Research Toolkit. The system was benchmarked against leading general-purpose AI models on 200 questions from the National Conference of Bar Examiners MBE Complete Practice Exam. DescrybeLM answered all 200 correctly, while ChatGPT 5.2, Claude Opus 4.5, and Gemini 3 Pro scored between 88.5% and 93.5%, each missing between 13 and 23 questions. On rubric-scored reasoning quality, DescrybeLM scored 99.70%, compared with 89-93% for the general-purpose models. Of the 52 incorrect answers the three general-purpose models produced, 49 were delivered confidently, with no signal of uncertainty; the dominant failures were applying the wrong legal standard or misapplying the correct one.

Read at LawSites
