Artificial intelligence

from www.businessinsider.com

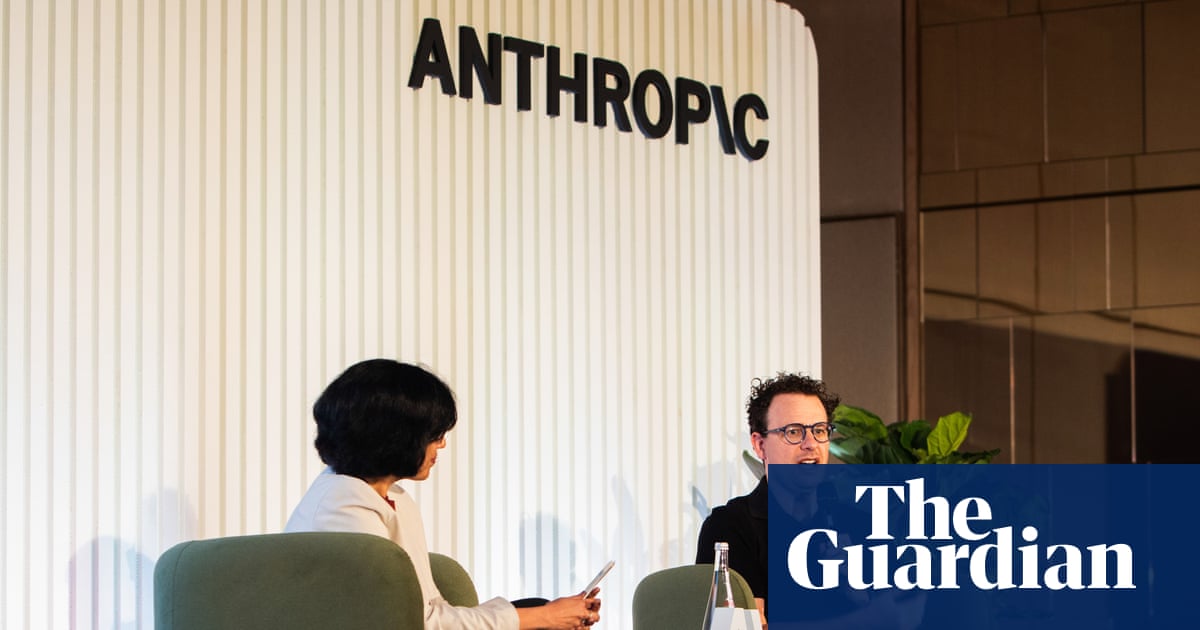

13 hours ago

Why Anthropic's new AI model has some cybersecurity pros worried about its hacking abilities

Anthropic's Claude Mythos Preview is withheld from public release due to concerns over its potential to exploit software vulnerabilities autonomously.