"I know for sure that some of the users are pinned to a vulnerable version. If they don't publish an advisory, those users may never know they are vulnerable - or under attack."

"Microsoft, Google, and Anthropic are the top three. We may find this vulnerability in other vendors as well."

"It uses the AI agent to find vulnerabilities in the code - that's what the software is designed to do." This made him curious about the flow: how user prompts reach the agents, and how the agents then take action based on those prompts.

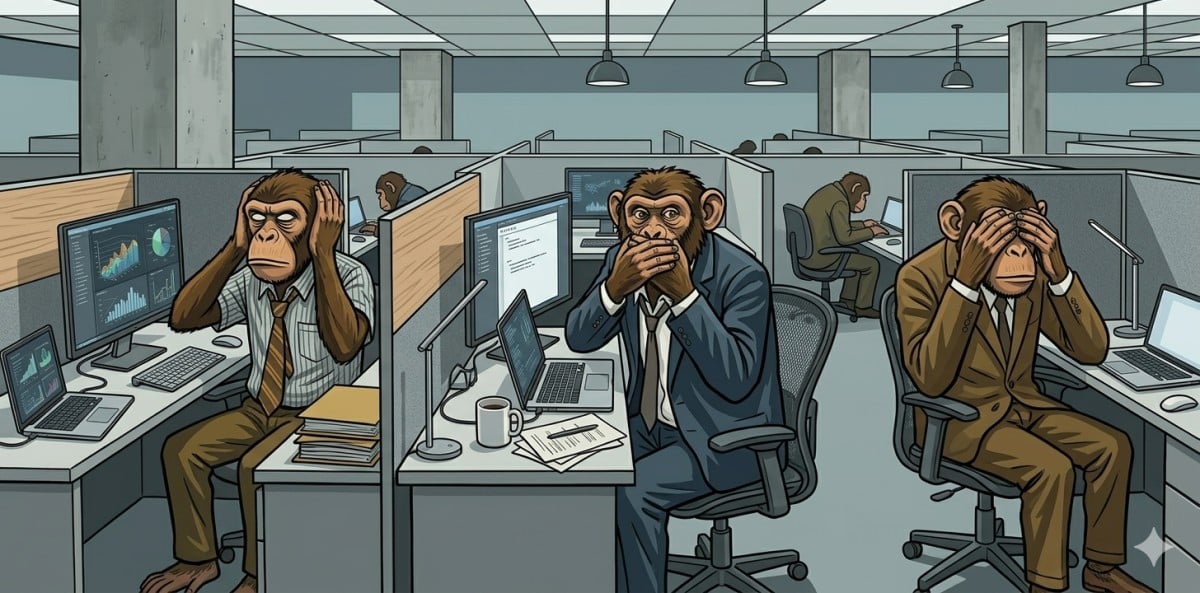

Researchers hijacked AI agents from Anthropic, Google, and Microsoft using prompt injection attacks to steal API keys and access tokens. Although the researchers received bug bounties, the vendors did not publicly disclose the vulnerabilities or assign CVEs. Without public advisories, users, including those pinned to a vulnerable version, may never learn that they are exposed or under attack. The attack method may also affect other GitHub-integrated agents.

Read at The Register
