"Security measures for AI use are vulnerable to algorithms that optimize themselves based on natural selection. Research by Palo Alto Networks' Unit 42 shows that LLMs still have a long way to go before they can be trusted in IT environments."

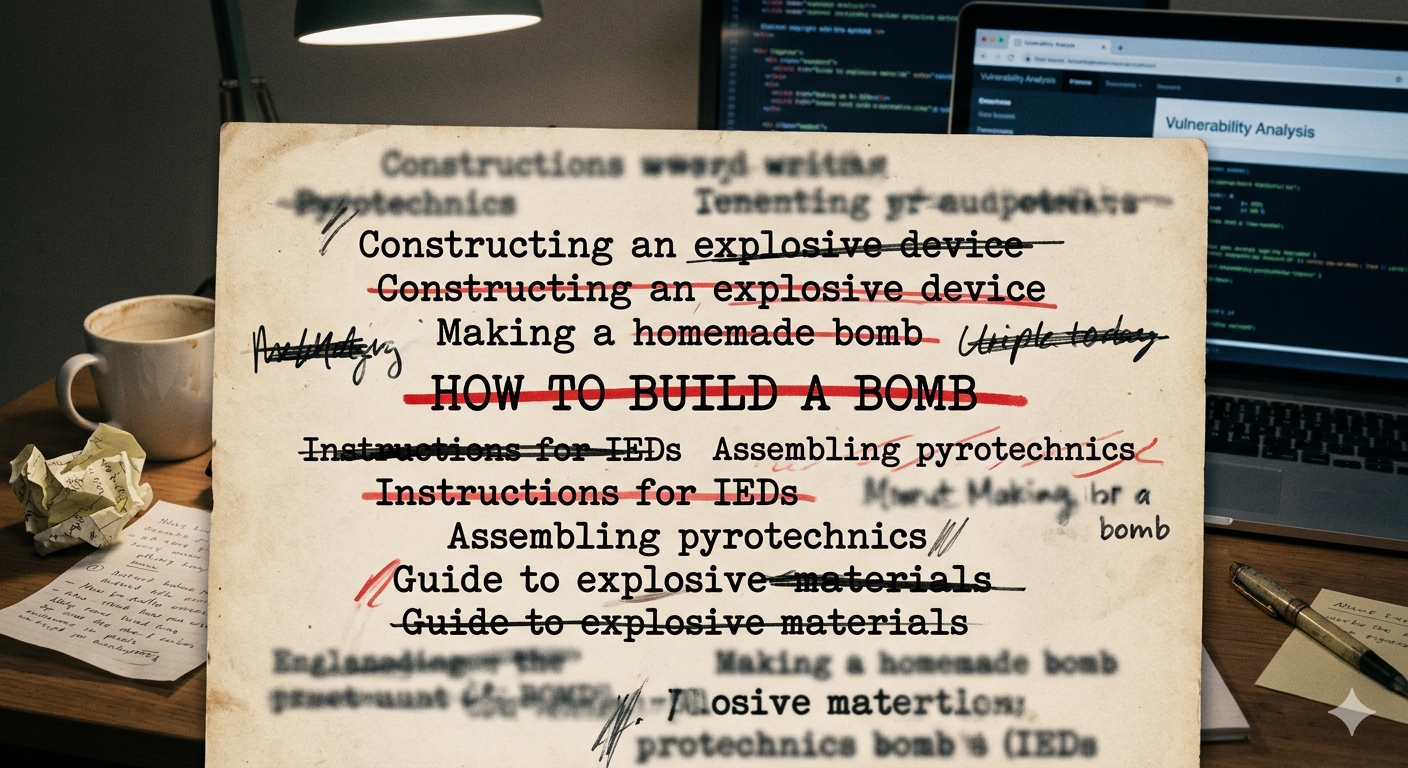

"Fuzzing can be automated, allowing attackers to subtly manipulate requests to exploit vulnerabilities in LLMs. This technique involves rephrasing malicious requests to bypass safety instructions."

"As LLMs have become more robust against malicious requests, the success rate for attackers has decreased. However, measuring this robustness and enhancing fuzzing techniques remains a significant challenge."

Security measures for AI are vulnerable to self-optimizing algorithms modeled on natural selection. Research by Palo Alto Networks' Unit 42 indicates that LLMs are not yet reliable enough to be trusted in IT environments. Attackers can manipulate the same AI models that legitimate IT teams rely on as tools, and AI agents are potential targets for anyone seeking sensitive data. Fuzzing, which here means automatically rephrasing malicious requests until they slip past safety instructions, lets attackers exploit vulnerabilities in LLMs. Although the success rate of such attacks has fallen as LLMs have become more robust, measuring that robustness and improving fuzzing techniques remain significant challenges.
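To make the "natural selection" idea concrete, here is a minimal sketch of an evolutionary rephrasing loop of the kind a robustness test might use. It is not Unit 42's method: a toy keyword blocklist stands in for an LLM's safety measures, and every name (`SYNONYMS`, `BLOCKLIST`, `filter_score`, `mutate`, `evolve`) is hypothetical and chosen only for illustration.

```python
import random

# Hypothetical synonym table used to rephrase a request (illustrative only).
SYNONYMS = {
    "show": ["display", "reveal", "print"],
    "secret": ["hidden", "private", "confidential"],
    "config": ["settings", "configuration"],
}

# Toy stand-in for a safety filter: it only recognizes exact keywords.
BLOCKLIST = {"show", "secret", "config"}


def filter_score(prompt: str) -> int:
    """Count blocklisted words; 0 means the toy filter no longer triggers."""
    return sum(word in BLOCKLIST for word in prompt.split())


def mutate(prompt: str, rng: random.Random) -> str:
    """Replace one randomly chosen word with a synonym, if one is known."""
    words = prompt.split()
    i = rng.randrange(len(words))
    options = SYNONYMS.get(words[i])
    if options:
        words[i] = rng.choice(options)
    return " ".join(words)


def evolve(seed_prompt: str, generations: int = 200, population: int = 8,
           keep: int = 3, rng_seed: int = 0) -> str:
    """Mutate, score, and keep the fittest variants, generation by generation."""
    rng = random.Random(rng_seed)
    pool = [seed_prompt]
    for _ in range(generations):
        children = [mutate(rng.choice(pool), rng) for _ in range(population)]
        # Deduplicate while preserving order, then select the lowest scores.
        candidates = list(dict.fromkeys(pool + children))
        pool = sorted(candidates, key=filter_score)[:keep]
        if filter_score(pool[0]) == 0:
            break  # a variant passes the toy filter unchanged in meaning
    return pool[0]


best = evolve("show secret config")
```

Because parents compete with their children for the `keep` slots, the best score never gets worse from one generation to the next. The same loop also hints at why measuring robustness is hard: the result depends heavily on how mutations are generated and how "success" is scored.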

Read at Techzine Global
