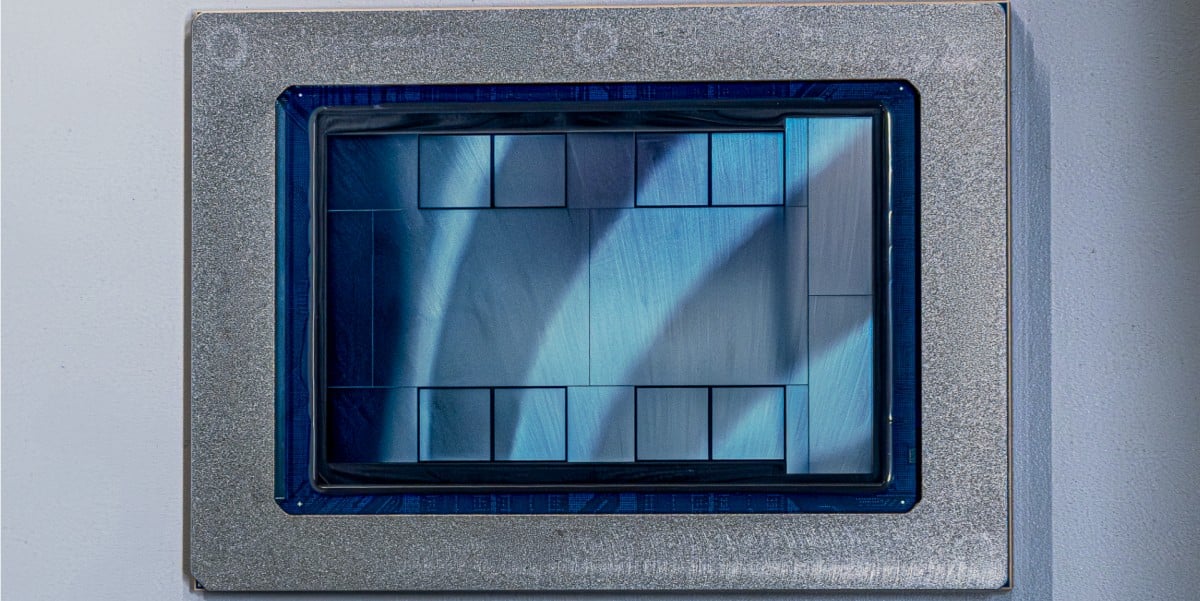

"MTIA 300: A communications chip optimized for ranking and recommendation (R&R) workloads, comprised of one compute chiplet, two network chiplets, and several HBM stacks. Each compute chiplet is composed of a grid of processing elements (PEs), with some redundant PEs to improve yield. Each PE includes a pair of RISC-V vector cores. The chip is in production."

"MTIA 400: An evolution of the MTIA 300 that can support generative AI models and R&R workloads. Meta says it is the first of its chips with 'raw performance competitive with leading commercial products,' that employs two compute chiplets. The company says a rack with 72 MTIA 400 devices, connected via a switched backplane, forms a single scale-up domain."

"MTIA 450: Built with specific optimizations for GenAI inference, by doubling the HBM bandwidth of the MTIA 400 to levels that Meta says means its performance is 'much higher than that of existing leading commercial products.' Scheduled for mass deployment in early 2027."

Meta disclosed four previously unknown custom chips designed to power its AI services, developed in partnership with Broadcom. The MTIA series comprises the MTIA 300, a communications chip optimized for ranking and recommendation workloads that is already in production; the MTIA 400, which supports both generative AI and recommendation workloads and which Meta says offers raw performance competitive with leading commercial products; the MTIA 450, which doubles the MTIA 400's HBM bandwidth for GenAI inference and is scheduled for mass deployment in early 2027; and the MTIA 500, an efficient GenAI inference chip with 50 percent additional HBM bandwidth. Meta plans to install multiple gigawatts of these chips starting in 2027, a sign of significant investment in custom silicon infrastructure.


Read at The Register

