"TurboQuant centers around a massive six-fold reduction in KV cache size. This is essentially the working memory for an LLM, and its scaling has kept AI researchers busy for years."

"On 8 H100 GPUs, attention performance jumps by 8x thanks to TurboQuant's implementation, significantly enhancing the capabilities of large language models."

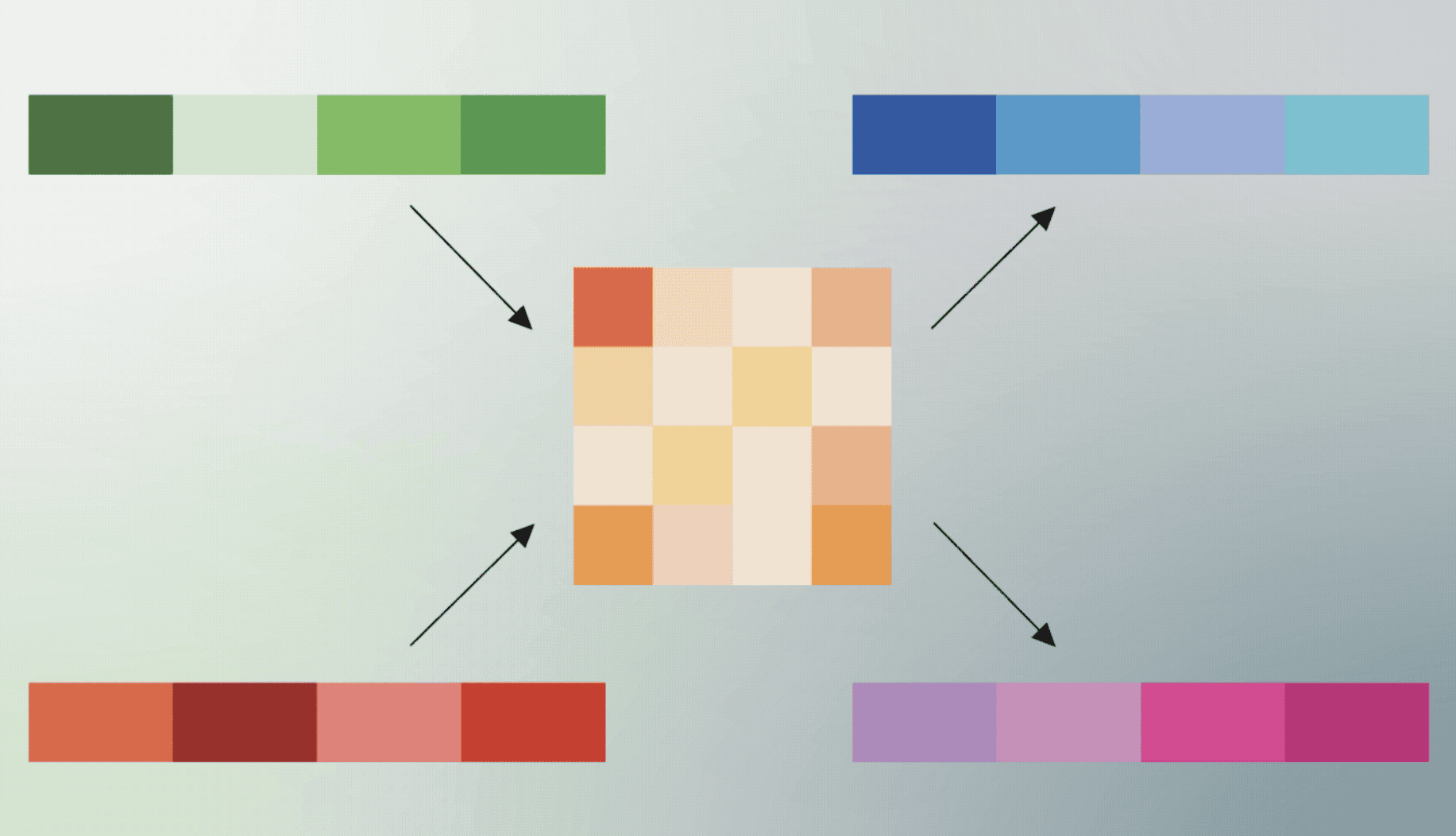

"TurboQuant achieves high-quality compression through what the researchers call PolarQuant, which simplifies the data's effective shape while largely maintaining its meaning."

"As put by Cloudflare CEO Matthew Prince, 'this is Google's DeepSeek', referring to the breakthrough made by the Chinese DeepSeek team with their reasoning model."

TurboQuant, a new compression technique from Google Research, cuts KV cache size six-fold. The KV cache is effectively an LLM's working memory, so a smaller cache leaves room for longer context windows, which makes models more useful when working with large datasets. On 8 H100 GPUs, attention performance increases by 8x with TurboQuant. The method relies on what the researchers call PolarQuant, which simplifies the data's effective shape while largely preserving its meaning. Cloudflare CEO Matthew Prince called it "Google's DeepSeek", a reference to the breakthrough the Chinese DeepSeek team made with its reasoning model.
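To make the six-fold figure concrete, here is a rough back-of-the-envelope sketch of KV cache sizing. The model dimensions, the 16-bit baseline, and the 16 GiB memory budget are illustrative assumptions, not figures from the article, and the sketch simply applies the reported 6x factor uniformly to the per-token cost rather than modeling how TurboQuant actually encodes the cache.

```python
def kv_bytes_per_token(num_layers: int, num_kv_heads: int, head_dim: int,
                       bytes_per_value: int) -> int:
    """Bytes of KV cache one token occupies (keys and values, all layers)."""
    return 2 * num_layers * num_kv_heads * head_dim * bytes_per_value


def max_tokens(budget_bytes: int, bytes_per_token: int, reduction: int = 1) -> int:
    """Tokens that fit in a memory budget when the cache shrinks by `reduction`."""
    return (budget_bytes * reduction) // bytes_per_token


# Hypothetical model shape and baseline precision (assumptions for illustration).
LAYERS, KV_HEADS, HEAD_DIM = 32, 8, 128
BYTES_FP16 = 2
REDUCTION = 6               # the six-fold reduction reported for TurboQuant
BUDGET = 16 * 1024**3       # assumed 16 GiB reserved for the KV cache

per_token = kv_bytes_per_token(LAYERS, KV_HEADS, HEAD_DIM, BYTES_FP16)
baseline_tokens = max_tokens(BUDGET, per_token)
compressed_tokens = max_tokens(BUDGET, per_token, REDUCTION)

print(f"KV cache per token (uncompressed): {per_token / 1024:.0f} KiB")
print(f"Tokens in budget, uncompressed:    {baseline_tokens:,}")
print(f"Tokens in budget, 6x compressed:   {compressed_tokens:,}")
```

Under these assumptions the same memory budget holds six times as many tokens, which is the sense in which a smaller KV cache translates into a larger usable context window.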

Read at Techzine Global
