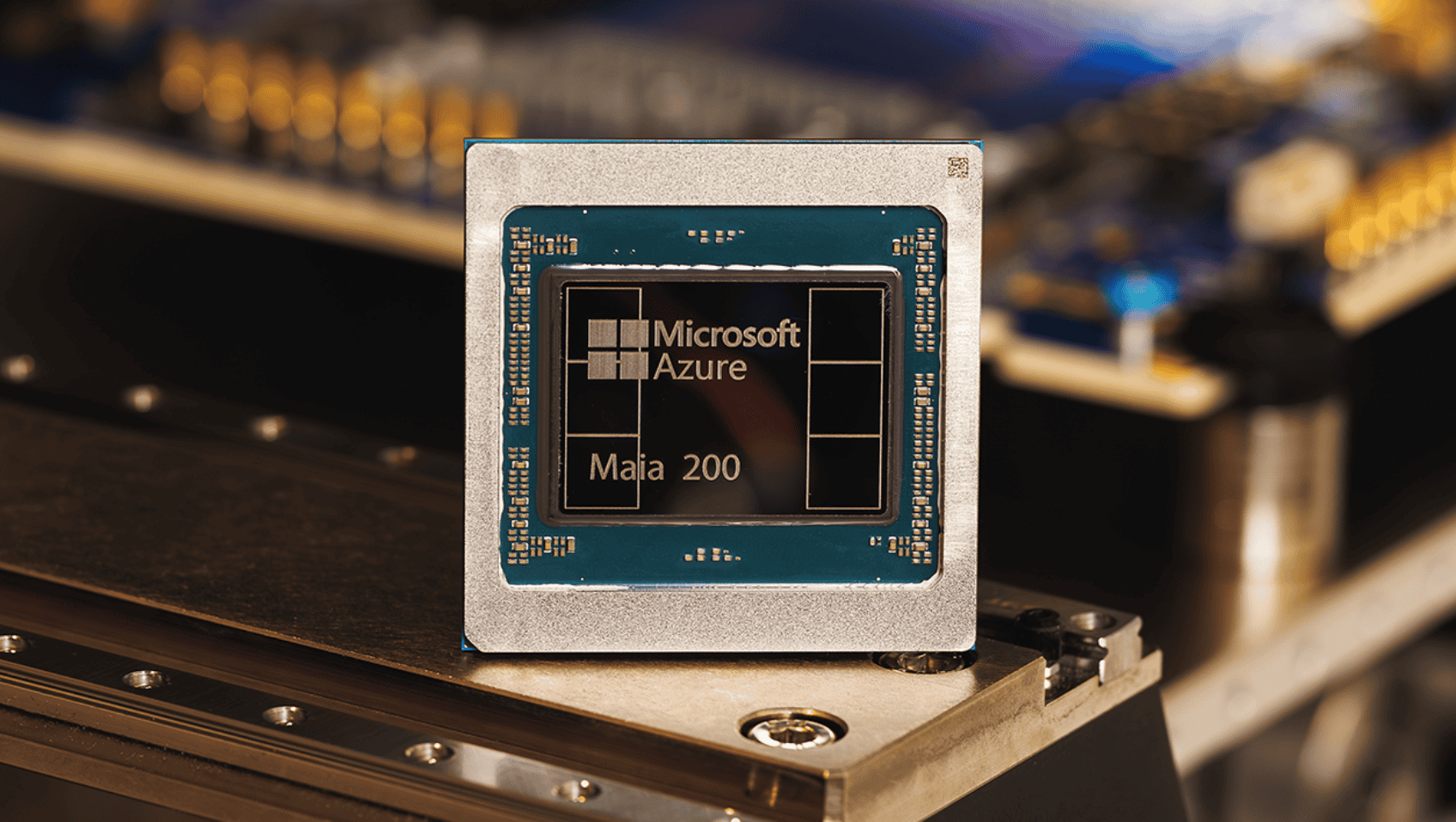

"Microsoft's Maia 200 is designed to deliver efficient AI inferencing in the cloud, significantly reducing costs compared to traditional GPU-based methods. The shift from GPUs to dedicated AI accelerators reflects the growing demand for continuous inferencing, which requires more compute power than initial training phases."

"The early reliance on GPUs for GenAI workloads has evolved, with Maia 200 demonstrating that specialized hardware can optimize performance and cost. The contrast between the early days of ChatGPT and the current landscape highlights the rapid advancements in AI computing technology."

Moore's Law has become less relevant as new chip designs emerge to support AI models. Microsoft's Maia 200 aims to make inferencing on Azure more cost-efficient. Demand for compute has grown significantly since the training of GPT-4, which required 25,000 Nvidia A100 GPUs. Training is an initial, one-time effort, whereas inference runs continuously once a model is in use, so over time it requires more compute than training. AI accelerators built specifically for cloud-based inferencing, such as Maia 200, therefore mark a shift away from relying solely on GPUs.

Read at Techzine Global

